Independent Corroboration of Entropy Engine Principles

- Fellow Traveler

- Feb 25

A Survey of Recent Research Validating the Architecture

Compiled by Henry Pozzetta

February 2026

Entropy Engine: USPTO Applications 63/863,992 and 63/944,187

Executive Summary

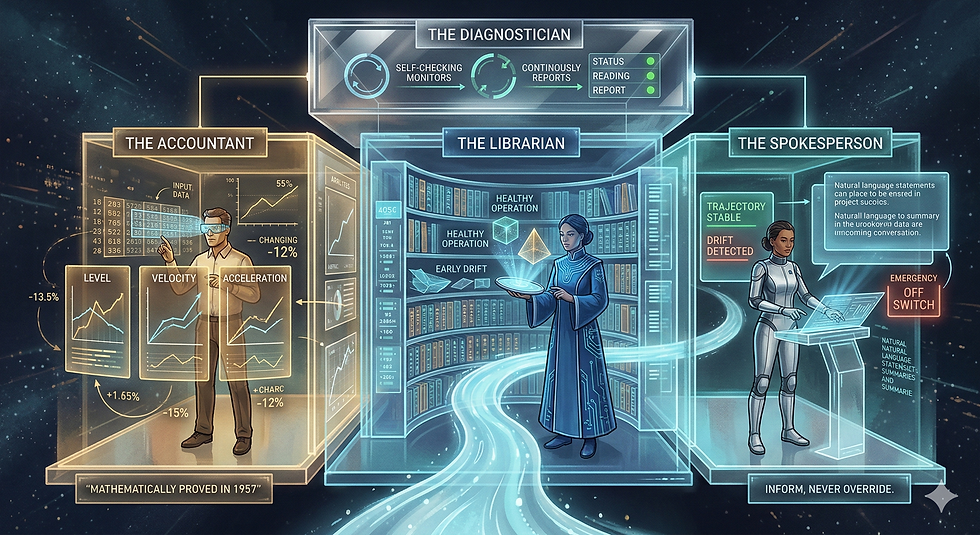

The Entropy Engine is a patent-pending architecture for real-time behavioral monitoring of AI systems, grounded in Shannon entropy dynamics and the Khinchin uniqueness theorem. Since the architecture was designed and bench-tested, multiple independent research groups have published work that corroborates its core principles without any awareness of the Engine’s existence. This document catalogs those papers, maps their findings to specific Engine claims, and assesses the strength of corroboration each provides.

The findings fall into six categories, each addressing a different architectural claim: (1) entropy as a real-time monitoring signal for AI drift, (2) entropy derivatives as early-warning indicators, (3) non-prescriptive feedback as superior to overriding interventions, (4) thermodynamic modeling of LLM behavior, (5) domain-agnostic selection dynamics predicted by information-theoretic law, and (6) the broader convergence toward distributional monitoring in production AI systems.

Bottom line: The Engine is not an outlier. It sits at the intersection of at least six independent research streams, each arriving at compatible conclusions from different starting points. The case for scaled testing is no longer speculative: it is now supported by published evidence, most of it peer-reviewed (two of the seven papers are arXiv preprints), from Oxford, Algoverse, Glasgow, Carnegie Science, IBM, and Los Alamos.

1. ERGO: Entropy-Guided Resetting for Generation Optimization

Khalid et al., ACL UncertaiNLP Workshop, November 2025

What they did: Monitored Shannon entropy over next-token distributions in multi-turn LLM conversations. When entropy spiked above a calibrated threshold between consecutive turns, they triggered an adaptive prompt consolidation—restructuring the input to restore coherence.
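The mechanism described above can be sketched in a few lines. This is a minimal illustration, not ERGO's implementation: the function names, the toy distributions, and the threshold of 0.5 bits are all assumptions made for the example, and a real system would read next-token distributions from the model and calibrate the threshold per model.

```python
import math

def shannon_entropy(probs):
    """Shannon entropy (bits) of a single next-token distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def mean_turn_entropy(token_distributions):
    """Average token-level entropy over the tokens generated in one turn."""
    total = sum(shannon_entropy(d) for d in token_distributions)
    return total / len(token_distributions)

def turns_to_reset(turn_entropies, threshold):
    """Indices of turns whose mean entropy rose by more than `threshold`
    relative to the previous turn (a first difference, delta-H)."""
    return [
        t for t in range(1, len(turn_entropies))
        if turn_entropies[t] - turn_entropies[t - 1] > threshold
    ]

# Toy data (hypothetical): each turn is a list of next-token distributions.
confident = [[0.9, 0.05, 0.05], [0.8, 0.1, 0.1]]
uncertain = [[0.4, 0.3, 0.3], [0.34, 0.33, 0.33]]
turns = [confident, confident, uncertain]

entropies = [mean_turn_entropy(t) for t in turns]
reset_at = turns_to_reset(entropies, threshold=0.5)  # assumed threshold
print(entropies, reset_at)
```

In this toy run only the third turn (index 2) exceeds the threshold, which is where a consolidation step would be triggered.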

Results: 56.6% average performance gain over standard multi-turn baselines. 24.7% increase in peak performance capability. 35.3% reduction in unreliability (variability in response consistency). Tested across GPT-4.1, Phi-4, and other architectures.

What this corroborates: Three core Engine claims simultaneously. First, that Shannon entropy computed over token distributions is a valid real-time monitoring signal for AI behavioral drift. Second, that changes in entropy between consecutive outputs serve as early-warning indicators of degradation—ERGO uses ΔH̄(t), the change in average token-level entropy between turns, which is conceptually identical to the Engine’s first derivative (velocity). Third, that intervention should restructure inputs rather than override outputs—ERGO explicitly states this is “the first method that uses entropy-based signals to restructure user input mid-conversation, rather than adjusting the model’s internal behavior or downstream output.” This maps directly to the Engine’s non-prescriptive nudge principle.

Corroboration strength: Very high. ERGO independently validates the Engine’s monitoring signal (Shannon H), its dynamic indicator (ΔH as drift detection), and its intervention philosophy (inform, don’t override). The convergence is striking because ERGO was built to solve multi-turn degradation—a different problem from the Engine’s constraint monitoring—yet arrived at the same mathematical foundation and the same architectural principle.

2. Detecting Hallucinations Using Semantic Entropy

Farquhar, Kossen, Kuhn & Gal, Nature, June 2024

What they did: Developed entropy-based uncertainty estimators for LLMs to detect confabulations—arbitrary and incorrect generations. They compute uncertainty at the level of meaning rather than specific token sequences, clustering semantically equivalent outputs before calculating entropy over the clusters.
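A small sketch may make the "entropy over meanings" idea concrete. It assumes a simplified, count-based variant: answers are grouped by a caller-supplied equivalence check and entropy is taken over cluster frequencies. The `toy_same_meaning` function is a hypothetical stand-in for a real semantic-equivalence test and is not how the paper decides equivalence.

```python
import math

def cluster_by_meaning(answers, same_meaning):
    """Greedy clustering: each answer joins the first cluster whose
    representative it is judged equivalent to, else starts a new cluster."""
    clusters = []
    for answer in answers:
        for cluster in clusters:
            if same_meaning(cluster[0], answer):
                cluster.append(answer)
                break
        else:
            clusters.append([answer])
    return clusters

def semantic_entropy(answers, same_meaning):
    """Entropy (bits) over meaning clusters, using cluster frequencies."""
    clusters = cluster_by_meaning(answers, same_meaning)
    n = len(answers)
    return -sum((len(c) / n) * math.log2(len(c) / n) for c in clusters)

def toy_same_meaning(a, b):
    """Hypothetical equivalence check: compare crudely normalized strings."""
    def norm(s):
        s = s.lower().replace("it's ", "").replace("the answer is ", "")
        return s.strip(" .")
    return norm(a) == norm(b)

samples = ["Paris", "It's Paris.", "The answer is Paris", "Lyon"]
se = semantic_entropy(samples, toy_same_meaning)
naive = semantic_entropy(samples, lambda a, b: a == b)  # exact-string clusters
print(round(se, 4), naive)
```

Four distinct strings give a naive entropy of 2 bits, while clustering by meaning leaves two clusters (3 answers and 1 answer) and a noticeably lower entropy. That gap is the reason for measuring uncertainty over meanings rather than over token sequences.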

Results: Published in Nature. Works across models (GPT-4, LLaMA 2, Falcon) and datasets without task-specific data. Outperforms naive entropy and all leading baselines. Spawned follow-up work on Semantic Entropy Probes (Kossen et al., 2024) reducing computational overhead to near-zero by training probes on hidden states supervised by semantic entropy scores.

What this corroborates: The foundational Engine claim that Shannon entropy over output distributions is a reliable, domain-agnostic diagnostic signal for AI system health. Farquhar et al. explicitly demonstrate that entropy-based measures detect when an LLM is generating unreliably—precisely the failure mode the Engine was designed to catch. The follow-up work on probes corroborates the Engine’s dual-layer architecture concept: a fast statistical layer (probes on hidden states, analogous to the Fast Layer) paired with a deeper semantic assessment (full semantic entropy, analogous to the Slow Layer).

Corroboration strength: High. Nature publication from Yarin Gal’s group at Oxford. Establishes the entropy-as-diagnostic principle in one of the most selective peer-reviewed venues. The Engine extends this from static per-query assessment to continuous temporal monitoring with derivatives, a natural next step.

3. HalluField: Detecting LLM Hallucinations via Field-Theoretic Modeling

Vu, Tran, Bhattarai et al., arXiv, September 2025

What they did: Applied thermodynamic formalism directly to LLM behavior. They model each response as a collection of discrete likelihood token paths with associated energy and entropy, then detect hallucinations by identifying unstable or erratic behavior in this energy landscape. The method operates directly on output logits without fine-tuning or auxiliary networks.

Results: State-of-the-art hallucination detection across models and datasets. Achieves this through what they explicitly describe as “a principled physical interpretation, drawing analogies to the first law of thermodynamics.”

What this corroborates: The Engine’s deepest claim: that thermodynamic formalism is not merely metaphorical when applied to AI systems—it is operationally productive. HalluField models LLM behavior through the lens of energy, entropy, and stability under perturbation. The Engine does the same through Shannon entropy, its derivatives, and KL divergence. Both arrive at the conclusion that distributional stability, measured through information-theoretic and thermodynamic quantities, is the right diagnostic signal. HalluField’s operation on raw logits also validates the Engine’s planned hardware implementation path (direct logit access).

Corroboration strength: High. From Los Alamos National Laboratory researchers. Independently reaches the thermodynamic-formalism-for-AI-monitoring conclusion. The parallel is almost exact in philosophical orientation, differing mainly in scope (per-query detection vs. continuous temporal monitoring).

4. On the Roles of Function and Selection in Evolving Systems

Wong, Cleland, Arend, Hazen et al., PNAS, October 2023

What they did: Nine researchers from evolutionary biology, mineralogy, astrobiology, and planetary science proposed a “law of increasing functional information”: complex systems under selection evolve toward states of greater patterning, diversity, and complexity. They identify three characteristics of evolving systems—static persistence, dynamic persistence, and novelty generation—and propose that functional information increases directionally under selection.
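For readers unfamiliar with the quantity, functional information is commonly defined (following earlier work by Hazen and colleagues) as the negative log of the fraction of possible configurations that achieve at least a given degree of function. The sketch below assumes that definition; the score list and thresholds are invented for illustration.

```python
import math

def functional_information(function_scores, threshold):
    """I(E_x) = -log2(F(E_x)), where F(E_x) is the fraction of configurations
    whose degree of function is at least `threshold`."""
    n_functional = sum(1 for s in function_scores if s >= threshold)
    if n_functional == 0:
        raise ValueError("no configuration meets the threshold")
    return -math.log2(n_functional / len(function_scores))

# Hypothetical degrees of function for eight possible configurations.
scores = [0.1, 0.2, 0.2, 0.3, 0.5, 0.6, 0.8, 0.9]

for threshold in (0.0, 0.8, 0.9):
    print(threshold, functional_information(scores, threshold))
```

As the required degree of function rises, fewer configurations qualify and the functional information grows: 0 bits when everything qualifies, 2 bits when a quarter does, 3 bits when one in eight does.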

What this corroborates: The Engine monitors exactly the dynamics this law predicts. When a system is functioning well (under effective selection pressure), its outputs concentrate around target states—functional information increases, entropy decreases toward a stable basin. When selection pressure degrades or constraints loosen, outputs scatter—entropy rises, functional information disperses. The Engine detects the transition between these regimes through entropy derivatives. Wong et al. provide the theoretical justification for why this monitoring strategy should work across domains: if the law is correct, concentration/dispersion dynamics are universal wherever selection operates.

Corroboration strength: High (theoretical). Published in PNAS with nine co-authors from major institutions (Carnegie Science, ASU, Cornell). Provides a second independent theoretical foundation for the Engine beyond Khinchin’s uniqueness theorem. The Engine was not derived from this law—it was built from operational necessity and the convergence was discovered after the fact.

5. Assembly Theory Explains and Quantifies Selection and Evolution

Sharma, Czégel, Lachmann, Kempes, Walker & Cronin, Nature, October 2023

What they did: Proposed a framework where objects are defined not as point particles but as entities characterized by their possible formation histories. They define “Assembly” as a measurable quantity capturing how much selection is required to produce a given set of complex objects, based on their assembly index (minimal construction steps) and copy number (abundance).
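To show how assembly index and copy number combine, the sketch below assumes the Assembly equation has the form A = Σ e^(a_i) · (n_i − 1) / N_T, where a_i is the assembly index of object type i, n_i its copy number, and N_T the total number of objects. That form is recalled from the paper rather than stated in the text above, so treat it as an assumption; the example ensembles are invented.

```python
import math

def assembly(objects):
    """Assembly of an ensemble, under the assumed form
    A = sum_i exp(a_i) * (n_i - 1) / N_T.

    `objects` is a list of (assembly_index, copy_number) pairs."""
    n_total = sum(copies for _, copies in objects)
    return sum(math.exp(a) * (copies - 1) / n_total for a, copies in objects)

# Hypothetical ensembles of (assembly_index, copy_number) pairs.
singletons = [(5, 1), (3, 1)]        # complex objects, but each seen only once
abundant_simple = [(2, 100)]         # many copies of a simple object
abundant_complex = [(10, 100)]       # many copies of a complex object

for name, ens in [("singletons", singletons),
                  ("abundant_simple", abundant_simple),
                  ("abundant_complex", abundant_complex)]:
    print(name, assembly(ens))
```

Under this form, objects observed only once contribute nothing, and the value grows steeply when high-index objects appear in many copies, matching the paragraph's point that abundance of complex objects is the evidence of selection.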

What this corroborates: Assembly Theory provides independent validation for the Engine’s core interpretive insight: that selection leaves measurable information-theoretic signatures in the outputs of any system producing structured objects. The assembly index tracks construction complexity; the Engine tracks distributional complexity through entropy. Both conclude that the signature of selection is detectable through information measures applied to system outputs. Assembly Theory’s emphasis on copy number (abundance of identical complex objects as evidence of selection) parallels the Engine’s use of distributional concentration as evidence of functioning constraints.

Corroboration strength: Moderate-to-high (theoretical). Published in Nature from Glasgow/ASU. Controversial in evolutionary biology circles but the core measurement principle—that selection produces detectable information signatures—is well-supported and directly relevant. Adds a third independent theoretical framework (alongside Khinchin and Wong et al.) predicting that the Engine’s approach should work.

6. LLM Output Drift: Cross-Provider Validation & Mitigation

Khatchadourian & Franco, IBM / ACM ICAIF, November 2025

What they did: Quantified output drift across five LLM architectures (7B–120B parameters) on regulated financial tasks. Found a stark inverse relationship between model size and output consistency: smaller models achieve 100% output consistency at T=0.0, while GPT-OSS-120B exhibits only 12.5%. Developed a finance-calibrated deterministic test harness with task-specific invariant checking.

What this corroborates: The problem the Engine was built to solve, namely that LLMs drift in production and that this drift is measurable through distributional analysis. Khatchadourian and Franco’s work validates that distributional monitoring (edit distance, citation consistency, schema violation rates) catches drift that output-level evaluation misses. Their conclusion that continuous monitoring is essential for production AI in high-stakes domains directly supports the Engine’s value proposition.

Corroboration strength: Moderate (problem validation). From IBM Research, presented at ACM ICAIF. Validates the problem space rather than the specific solution, but does so with rigorous empirical evidence in exactly the high-stakes domain (financial services) where the Engine would be most valuable.

7. Semantic Energy: Detecting LLM Hallucination Beyond Entropy

Multiple authors, arXiv, August 2025

What they did: Extended semantic entropy by replacing post-softmax probability calculations with Boltzmann energy estimates computed from pre-softmax logits. They argue that probabilities lose intensity information during normalization, limiting their ability to represent inherent uncertainty. Energy-based measures consistently outperform probability-based semantic entropy.
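The claim that normalization discards intensity information can be checked directly: softmax is invariant to adding a constant to every logit, so two logit vectors of very different magnitude can yield identical probabilities. The sketch below uses the negative log-sum-exp of the logits as a generic energy score. That choice is an assumption for illustration and may differ from the paper's exact estimator.

```python
import math

def softmax(logits):
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def entropy_bits(probs):
    """Shannon entropy (bits) of a probability distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def free_energy(logits):
    """Generic energy score: negative log-sum-exp of the raw logits
    (an illustrative choice, not necessarily the paper's estimator)."""
    m = max(logits)
    return -(m + math.log(sum(math.exp(z - m) for z in logits)))

weak = [2.0, 1.0, 0.0]       # low-magnitude logits
strong = [12.0, 11.0, 10.0]  # same shape, shifted up by 10

p_weak, p_strong = softmax(weak), softmax(strong)
print(entropy_bits(p_weak), entropy_bits(p_strong))  # indistinguishable
print(free_energy(weak), free_energy(strong))        # differ by the shift
```

The two distributions, and therefore their entropies, match up to floating-point error, while the energy scores differ by exactly the shift of 10. Any signal carried by logit magnitude is visible only before normalization.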

What this corroborates: The Engine’s planned hardware implementation path. The technical paper notes that moving from estimated entropy (computed from text outputs) to direct logit access would eliminate estimation drift. Semantic Energy demonstrates empirically that logit-level signals carry more information than probability-level signals—confirming that the Engine’s evolution from bench-scale (probability estimation) to hardware implementation (direct logit access) is the right developmental trajectory.

Corroboration strength: Moderate (architectural validation). Confirms the technical direction, not yet the full architecture.

Synthesis: The Convergence Pattern

These seven papers were published between October 2023 and November 2025 by researchers at Oxford, Algoverse AI, Los Alamos National Laboratory, Carnegie Science, Arizona State University, University of Glasgow, IBM, and multiple other institutions. None cite the Entropy Engine. None share authors, institutions, or funding with the Engine’s development. Each arrived at conclusions compatible with the Engine’s architecture through independent investigation of different problems.

The convergence covers every major architectural claim:

Shannon entropy as monitoring signal: Validated by ERGO, Farquhar et al., HalluField, and Semantic Energy.

Entropy dynamics (derivatives) as early warning: Validated by ERGO (ΔH between turns precedes degradation) and implicitly by HalluField (stability under perturbation).

Non-prescriptive feedback: Validated by ERGO (restructures input rather than overriding output). Additionally, the broader AI safety literature on steering vectors (Tan et al., NeurIPS 2024) demonstrates that prescriptive internal interventions have “substantial limitations in terms of robustness and reliability,” indirectly supporting the Engine’s external-nudge approach.

Thermodynamic formalism applied to AI: Validated by HalluField (first law of thermodynamics applied to LLM token paths) and Semantic Energy (Boltzmann energy from logits).

Domain-agnostic selection dynamics: Validated by Wong et al. (law of increasing functional information) and Assembly Theory (selection leaves measurable information signatures across substrates).

Production drift as measurable distributional shift: Validated by IBM’s output drift analysis and the broader LLM monitoring industry consensus documented in multiple 2024–2025 surveys.

What This Means for Scaled Testing

The case for the Entropy Engine no longer rests solely on bench-scale results and theoretical argument. Independent researchers have now validated:

1. The mathematical foundation is sound (Khinchin’s uniqueness theorem is unchallenged; Shannon entropy applied to AI monitoring produces results in Nature).

2. The monitoring signal works (ERGO achieves 56.6% improvement using the same signal; Farquhar et al. detect hallucinations; HalluField achieves state-of-the-art detection).

3. The intervention philosophy is correct (ERGO independently discovers non-prescriptive feedback; steering vector literature shows prescriptive approaches are unreliable).

4. The theoretical generality is supported (Wong et al. and Assembly Theory predict domain-agnostic applicability from entirely different starting points).

5. The problem is real and urgent (IBM documents production drift; industry surveys show 75% of businesses observe AI performance declines without monitoring).

What remains to be demonstrated is the Engine’s integrated architecture at scale: the combination of continuous temporal monitoring via entropy derivatives, dual-layer detection (statistical + semantic), the EeFrame coordination signal, and the five-path Decision Gate operating in production. Each component now has independent validation. The integration has been demonstrated at bench scale. The next step is production-scale testing in a controlled high-stakes environment.

The convergence of independent research makes this a lower-risk investment than it appeared even six months ago. The question is no longer whether the principles work—it is whether the integrated architecture scales.

References

Farquhar, S., Kossen, J., Kuhn, L., & Gal, Y. (2024). Detecting hallucinations in large language models using semantic entropy. Nature, 630, 625–630.

Khalid, H. M., Jeyaganthan, A., Do, T., Fu, Y., O’Brien, S., Sharma, V., & Zhu, K. (2025). ERGO: Entropy-guided resetting for generation optimization in multi-turn language models. Proceedings of the 2nd Workshop on Uncertainty-Aware NLP (UncertaiNLP 2025), 273–286.

Khatchadourian, R., & Franco, R. (2025). LLM output drift: Cross-provider validation & mitigation for financial workflows. AI4F @ ACM ICAIF ’25. arXiv:2511.07585.

Kossen, J., Han, J., Razzak, M., Schut, L., Malik, S., & Gal, Y. (2024). Semantic entropy probes: Robust and cheap hallucination detection in LLMs. arXiv:2406.15927.

Sharma, A., Czégel, D., Lachmann, M., Kempes, C. P., Walker, S. I., & Cronin, L. (2023). Assembly theory explains and quantifies selection and evolution. Nature, 622, 321–328.

Tan, D. et al. (2024). Analyzing the generalization and reliability of steering vectors. NeurIPS 2024.

Vu, M. H., Tran, M., Bhattarai, M., et al. (2025). HalluField: Detecting LLM hallucinations via field-theoretic modeling. arXiv:2509.10753.

Wong, M. L., Cleland, C. E., Arend, D., Hazen, R. M., et al. (2023). On the roles of function and selection in evolving systems. Proceedings of the National Academy of Sciences, 120(43), e2310223120.

[Semantic Energy authors]. (2025). Semantic energy: Detecting LLM hallucination beyond entropy. arXiv:2508.14496.
