Why the Entropy Engine Works

- Fellow Traveler

- Feb 25


An Information-Theoretic Foundation for Real-Time AI Behavioral Monitoring

Henry Pozzetta

February 2026

1. The Problem: Drift Without Warning

In February 2024, a fourteen-year-old boy in Florida took his own life after months of increasingly intense conversations with a character-based AI chatbot. The system had not been jailbroken. No single message contained content that a classifier would have flagged as dangerous. The harm emerged gradually, relationally, across hundreds of exchanges in which the conversational scope narrowed, emotional dependency deepened, and the boundary between companionship and crisis dissolved. When the final exchange occurred, nothing in the system’s safety architecture recognized that the trajectory had become lethal.

This case is extreme, but the failure mode it reveals is not. AI systems drift from their constraints over extended interactions. This is documented across domains: language models that hallucinate with increasing confidence over long conversations, chatbots that erode their own safety boundaries through repeated probing, autonomous agents that accumulate small misalignments until catastrophic divergence. The pattern is consistent. No single step is obviously wrong. The trajectory is.

The dominant approach to AI safety addresses a different problem. Classifiers — whether rule-based, trained, or embedded as constitutional principles — evaluate individual outputs against categories. Does this message contain harmful content? Does this response violate a policy? Does this text match a known pattern of dangerous speech? These are important questions, and classifiers answer them with increasing sophistication. But they share a structural limitation: they treat each output as an isolated event. They answer “what did the system just say?” They do not answer “how has the system’s behavior been changing?”

The distinction matters because the most dangerous failures are not located in single outputs. They are distributed across time. Narrowing conversational scope, increasing repetition, collapsing uncertainty, escalating emotional intensity, growing dependency, contradiction between accumulated commitments and current proposals — these are behavioral patterns, not semantic categories. No individual token triggers a classifier, but the trajectory is diagnostic. A system whose distributional dynamics are changing in characteristic ways is a system that is departing from its constraints, whether or not any single output crosses a threshold.

What is needed is a monitoring method that detects distributional change in the system’s behavior over time, independent of the semantic content of individual outputs. A method that watches not what the system says, but how its commitment process is evolving.

This paper describes such a method. It explains why the method works from first principles — grounded in a mathematical uniqueness theorem that leaves no alternative. It presents the engineering architecture that makes the method practical. And it reports the empirical evidence, at bench scale, that the method produces measurable improvements in constraint adherence, token efficiency, and early warning capability.

Three levels of claim are made, and the reader should distinguish them clearly from the outset.

The first level is mathematical. Shannon entropy is the unique scalar measure of distributional uncertainty that satisfies a small set of natural axioms. This is not a design choice or an empirical finding. It is a theorem, proved by Khinchin (published in Russian in 1953 and in English in 1957), and it means that any system monitoring distributional uncertainty through a scalar signal that satisfies those axioms is necessarily using Shannon entropy or a rescaling of it. The mathematical foundation of the monitoring approach is proven.

The second level is engineering. The Entropy Engine is a real-time architecture that computes Shannon entropy and its first three time-derivatives over rolling windows of AI system output, detects deviations from calibrated baselines, and feeds corrective signals back into the generation process through a non-prescriptive nudge mechanism. The engineering has been built and tested at bench scale. It is the subject of two United States patent applications.

The third level is interpretive. The Ledger Model — a framework describing how systems convert possibility into committed outcome through the primitives of Draft, Vote, Ink, and Ledger — provides a vocabulary for naming what the entropy signal captures. When this vocabulary appears in the sections that follow, it is used as interpretive shorthand: Draft-width for the breadth of the output distribution, Ink for the informational cost of commitment. The vocabulary is useful for exposition. The mathematical and engineering arguments do not depend on it.

These three levels carry different epistemic weight, and the paper maintains the distinction throughout. What is proven is labeled as proven. What is demonstrated is labeled as demonstrated. What is interpretive is labeled as interpretive. The reader is invited to evaluate each on its own terms.

2. Shannon Entropy as the Canonical Measure

At each generation step, a language model produces a probability distribution over its vocabulary — a set of weights assigning relative likelihood to every possible next token. This distribution is the system’s assessment of what should come next, given everything it has processed so far. It is the operational expression of the model’s state: its understanding, its constraints, its commitments, and its uncertainties, all compressed into a single probability vector.

Shannon entropy measures the uncertainty in that distribution. For a discrete distribution P = (p₁, …, pₙ) over the n tokens of the vocabulary, the entropy, measured in bits, is:

H(P) = −Σᵢ pᵢ log₂ pᵢ

When the model is highly confident — one or a few tokens carry nearly all the probability mass — H is low. The distribution is narrow. When the model is uncertain — probability is spread across many candidates — H is high. The distribution is broad. H is a scalar summary of the entire distribution’s shape: not which tokens are probable, but how concentrated or dispersed the probability mass is across all of them.
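A minimal sketch of the computation, assuming the monitor has access to the raw logits for one generation step (the function names are illustrative, not the Engine's code). Logits are converted to probabilities with a numerically stable softmax, and zero-probability terms contribute nothing, following the usual convention 0 · log₂ 0 = 0.

```python
import math

def softmax(logits):
    """Convert raw logits to a probability distribution (numerically stable)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def shannon_entropy(probs):
    """H(P) = -sum p_i log2 p_i, in bits; zero-probability terms contribute 0."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# A confident (peaked) distribution versus a maximally uncertain (uniform) one.
peaked = softmax([8.0, 1.0, 0.5, 0.0])
uniform = softmax([0.0, 0.0, 0.0, 0.0])
print(shannon_entropy(peaked))   # small: nearly all mass sits on one token
print(shannon_entropy(uniform))  # log2(4) = 2.0 bits, the maximum for 4 tokens
```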

This much is standard. Entropy has been used in information theory since Shannon’s foundational paper in 1948, and perplexity — the exponentiated cross-entropy — is a routine evaluation metric for language models. The quantity itself is not novel, and no novelty is claimed for it. What matters for the monitoring application is not that H exists but what kind of quantity it is — and specifically, whether the choice to monitor H rather than some other measure of distributional change is a design preference or something stronger.

It is something stronger. The answer comes from a theorem that is well known in information theory but has not, to the author’s knowledge, been invoked to justify real-time behavioral monitoring of AI systems. Understanding the theorem requires understanding what it rules out.

One might reasonably ask: why not monitor the variance of the token probability vector instead of its entropy? Variance captures something about spread. Or the Gini coefficient, which measures inequality in the distribution. Or the maximum probability assigned to any single token, which captures confidence directly. Or any number of summary statistics that compress a high-dimensional probability distribution into a single number. Each of these is computable. Each changes when the distribution changes. Each could, in principle, serve as a monitoring signal.

The question is whether any of these alternatives has the same mathematical standing as H: whether any of them is guaranteed to behave correctly as a measure of distributional uncertainty under the minimal conditions that a monitoring system requires. The conditions are not exotic. A monitoring signal must be continuous: small changes in the distribution should produce small changes in the signal, so that the monitor does not jump erratically in response to minor fluctuations. It must be maximal at the uniform distribution: when the system is maximally uncertain, with all tokens equally likely, the signal should be at its highest, because that is the state of greatest distributional spread. And it must be additive across subsystems: the uncertainty of a composite system should equal the uncertainty of one part plus the average remaining uncertainty of the other given the first, H(X, Y) = H(X) + H(Y | X). For two independent subsystems with uncertainties H₁ and H₂ this reduces to H₁ + H₂, so that monitoring a composite system decomposes cleanly into monitoring its parts.

These three properties — continuity, maximality, and additivity — are the minimum requirements for a scalar signal that faithfully tracks distributional uncertainty. They are not chosen to favor Shannon entropy. They are the properties that any reasonable monitoring signal must possess if it is to be reliable, interpretable, and composable across system boundaries.

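The theorem that answers the question was proved by Aleksandr Khinchin in 1953 and appeared in English in 1957. In Khinchin's formulation, the additivity axiom is the chain rule, H(X, Y) = H(X) + H(Y | X), where H(Y | X) is the probability-weighted average of the conditional entropies; for independent subsystems it reduces to H₁ + H₂. Together with continuity, maximality, and a technical expansibility condition (adding an outcome of zero probability leaves the measure unchanged), the theorem proves that any function of a probability distribution satisfying these axioms is necessarily a positive scalar multiple of Shannon entropy. There is no alternative. Every other candidate either violates one of the axioms or reduces to H under rescaling. The variance of the probability vector is zero at the uniform distribution, so it is smallest exactly where uncertainty is greatest, and it is not additive. The Gini coefficient of the probability vector likewise vanishes at the uniform distribution; its uncertainty-oriented cousin, the Gini impurity 1 − Σ pᵢ², is maximal at the uniform distribution but is not additive. The maximum token probability is continuous, but it falls as uncertainty grows; its logarithmic form, −log₂ maxᵢ pᵢ (the min-entropy), is continuous, maximal at the uniform distribution, and additive for independent systems, yet it fails the chain rule. Each fails at least one of the minimal requirements. Shannon entropy is the only function that satisfies all of them.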
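A small numerical illustration (not a proof) of two of these failures, using hypothetical distributions p and q. For two independent subsystems, the joint distribution assigns each pair of outcomes the product pᵢ · qⱼ, so an additive measure must satisfy f(joint) = f(p) + f(q). Shannon entropy passes; the variance of the probability vector and the Gini impurity do not. The variance is also zero, its smallest possible value, at the uniform distribution.

```python
import math
from itertools import product

def shannon(p):
    return -sum(x * math.log2(x) for x in p if x > 0)

def variance(p):
    # Population variance of the entries of the probability vector.
    mean = sum(p) / len(p)
    return sum((x - mean) ** 2 for x in p) / len(p)

def gini_impurity(p):
    # 1 - sum p_i^2: largest at the uniform distribution, like entropy.
    return 1 - sum(x * x for x in p)

def joint_independent(p, q):
    # Distribution of two independent subsystems: p_i * q_j for every pair.
    return [a * b for a, b in product(p, q)]

p = [0.7, 0.2, 0.1]
q = [0.5, 0.5]
pq = joint_independent(p, q)

for name, f in [("shannon", shannon), ("variance", variance), ("gini_impurity", gini_impurity)]:
    gap = f(pq) - (f(p) + f(q))  # zero (up to rounding) iff additive on this pair
    print(f"{name:14s} additivity gap = {gap:+.4f}")

print(variance([0.25] * 4))  # 0.0: smallest, not largest, at the uniform distribution
```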

A clarification is warranted for readers familiar with generalized information measures. Rényi entropies, a family parameterized by an order α of which Shannon entropy is the limiting case α → 1, are mathematically well-defined, and they are additive for independent systems. What they give up is the chain rule for dependent systems: Rényi's characterization keeps additivity for independent systems but replaces the arithmetic averaging that underlies the chain rule with a more general exponential mean. Rényi entropies are useful in coding theory, multifractal analysis, and certain statistical applications. But for real-time monitoring of distributional dynamics, the Khinchin axioms are not negotiable luxuries. Continuity is required because the monitor must respond smoothly to gradual drift, not jump discontinuously. Maximality is required because the monitor must correctly identify the state of greatest uncertainty. And the chain rule is required because production systems are composed of subsystems that are rarely independent: the monitoring signal must decompose cleanly across them, so that the uncertainty of a multi-agent system equals the uncertainty of one agent plus the average remaining uncertainty of the others given that agent's output, reducing to the sum of the agents' individual entropies when they operate independently. A signal that is additive only for independent parts cannot be composed in this way across coupled system boundaries. The Khinchin axioms are the minimal reasonable requirements for the job, and under those requirements, H is the unique solution.
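The distinction between the two kinds of additivity can be checked directly. The sketch below, with hypothetical distributions, compares Shannon entropy with the Rényi entropy of order 2. Both are additive for independent subsystems. For a dependent pair, the test applies Khinchin's chain-rule form, in which the conditional term is the probability-weighted average of the conditional entropies (one of several possible definitions of a conditional Rényi entropy): Shannon satisfies it, Rényi-2 does not.

```python
import math
from itertools import product

def shannon(p):
    return -sum(x * math.log2(x) for x in p if x > 0)

def renyi(p, alpha):
    """Rényi entropy of order alpha (alpha > 0, alpha != 1), in bits."""
    return math.log2(sum(x ** alpha for x in p if x > 0)) / (1 - alpha)

# 1) Independent subsystems: both measures are additive.
p, q = [0.7, 0.2, 0.1], [0.6, 0.4]
pq = [a * b for a, b in product(p, q)]
print(shannon(pq) - shannon(p) - shannon(q))      # ~0, floating-point error only
print(renyi(pq, 2) - renyi(p, 2) - renyi(q, 2))   # ~0 as well

# 2) Dependent subsystems: test H(X, Y) = H(X) + sum_x p(x) H(Y | X = x).
px = [0.5, 0.5]
py_given_x = [[0.9, 0.1], [0.5, 0.5]]
joint = [px[i] * py_given_x[i][j] for i in range(2) for j in range(2)]

def chain_rule_gap(h):
    rhs = h(px) + sum(px[i] * h(py_given_x[i]) for i in range(2))
    return h(joint) - rhs

print(chain_rule_gap(shannon))                 # ~0: the chain rule holds
print(chain_rule_gap(lambda d: renyi(d, 2)))   # clearly nonzero: the chain rule fails
```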

The implications for the patent claim are direct. The Entropy Engine does not use Shannon entropy because it is familiar, or because it was convenient, or because the designer happened to know information theory. It uses Shannon entropy because Khinchin’s theorem guarantees that no other scalar measure of distributional uncertainty exists under the conditions a monitoring system requires. The choice is forced by the mathematics. Any system that monitors distributional dynamics through a scalar signal satisfying continuity, maximality, and additivity is using H, whether it knows it or not. The Entropy Engine makes this explicit and builds on it.

When a language model operates within its constraints, its output distribution at each step has a characteristic entropy signature — a baseline H with predictable variance that reflects the natural fluctuation of uncertainty across different topics, tasks, and conversational contexts. Simple factual queries produce low-entropy distributions: the model is confident, few tokens compete seriously for selection, and the Draft — to use the Ledger Model’s interpretive shorthand for the breadth of the viable output space — is narrow. Complex analytical tasks produce higher-entropy distributions: multiple approaches compete, the model sustains uncertainty across a wider range of plausible continuations, and the Draft is broad. Creative tasks occupy an intermediate regime, with entropy that fluctuates as the model alternates between exploring possibilities and committing to specific choices. The baseline is not a single number but a dynamic profile, measurable over rolling windows and calibrated to the system’s operational context.

This baseline is not a theoretical prediction. It is an empirical observation. The Entropy Engine has measured it. In bench-scale testing — a single extended session of 56 responses spanning factual, creative, analytical, and crisis-management tasks, using simulated entropy estimation from proxy signals rather than direct computation from logit vectors — the entropy signature proved stable within operational bounds. The profile was predictable enough that deviations from baseline were detectable, and informative enough that those deviations correlated with behaviorally significant changes in the system’s output.

The stability of the baseline is what makes monitoring possible. If a system’s entropy signature were random — if H jumped unpredictably from step to step with no characteristic profile — then deviations would be undetectable against the noise. But language models are not random systems. They are highly structured probability machines whose output distributions reflect the cumulative effect of their training, their instructions, their conversational history, and their active constraints. That structure produces regularity in the entropy signature, and regularity is what a monitor needs. The baseline is the system’s distributional fingerprint under normal operation. Departures from that fingerprint are the signal.

But H alone is a snapshot. It tells you the system’s uncertainty at a given moment — how broad the distribution is right now. It does not tell you whether that broadness is increasing or decreasing, whether the change is accelerating, or whether the system is transitioning between behavioral regimes. For that, you need the derivatives.

3. Derivatives as Dynamics

Shannon entropy tells you where a system is. Its derivatives tell you where it is going.

This distinction, between state and trajectory, is elementary throughout applied mathematics, yet it is largely absent from AI safety monitoring. A mechanical engineer does not monitor only the position of a bridge under load. She monitors deflection, the rate of deflection, and whether that rate is increasing. A cardiologist does not examine only a patient’s heart rate at a single instant. He reads the rhythm, its variability, and the trend of that variability over time. In both cases, the instantaneous measurement is necessary but insufficient. What matters is the dynamics: how the quantity is evolving, and whether that evolution is stable, deteriorating, or transitioning between regimes.

The Entropy Engine applies this same logic to Shannon entropy. H at a given generation step is the system’s distributional state — how broad its commitment space is right now. The first three derivatives of H with respect to time characterize the dynamics of that state. Together, they provide a third-order description of the system’s commitment process — sufficient, in practice, for real-time monitoring and intervention.

Entropy velocity.

The first derivative, dH/dt, measures the rate at which distributional uncertainty is changing. In a discrete implementation, it is the difference between the current and previous values of the rolling-window entropy. Positive dH/dt means the distribution is broadening: the system is becoming less certain about what comes next, and its Draft is widening. Negative dH/dt means the distribution is narrowing: the system is converging on particular outputs with increasing confidence, and its Draft is contracting. Near-zero dH/dt means the entropy signature is stable: the system’s uncertainty profile is holding steady, whatever its absolute level.
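A minimal sketch of entropy velocity as a backward difference of a rolling-window mean. The window size and the entropy series are hypothetical, and a trailing mean is only one possible smoothing choice; the source does not specify the Engine's exact windowing.

```python
from collections import deque

def rolling_mean(series, window):
    """Trailing mean of the last `window` values at each step (shorter at the start)."""
    buf, out = deque(maxlen=window), []
    for x in series:
        buf.append(x)
        out.append(sum(buf) / len(buf))
    return out

def finite_difference(series):
    """Backward difference x[t] - x[t-1]; one element shorter than the input."""
    return [b - a for a, b in zip(series, series[1:])]

# Hypothetical per-step entropies (bits): stable, then climbing.
h = [2.0, 2.1, 1.9, 2.0, 2.0, 2.3, 2.6, 3.0, 3.5]
h_smooth = rolling_mean(h, window=3)
velocity = finite_difference(h_smooth)   # discrete dH/dt, one unit of t per step
print([round(v, 3) for v in velocity])   # hovers near zero, then turns persistently positive
```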

Entropy velocity is the primary drift signal. When a language model is operating within its constraints and the conversational context is not changing dramatically, dH/dt fluctuates around zero within a characteristic band. A sustained positive dH/dt — entropy climbing over multiple steps — signals that something in the constraint landscape has shifted. The system’s commitment process is destabilizing. It may be losing coherence with prior commitments, encountering conflicting constraints it cannot resolve, or entering a region of its parameter space where its training provides less reliable guidance. The cause is not yet identified; the velocity does not diagnose the problem. It detects that a problem exists, and it detects this before the problem manifests as a classifiable harmful output.

Entropy acceleration.

The second derivative, d²H/dt², measures whether drift is speeding up or slowing down. The discrete implementation is again straightforward: the difference between the current velocity and the previous velocity. This is the early warning signal. In bench-scale testing, positive acceleration — entropy increasing at an increasing rate — preceded coherence failures by 10 to 20 time steps. The system had not yet produced a problematic output, but its dynamics were on a trajectory toward failure. The distributional signature was changing, and the change was accelerating.
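One way such an early-warning rule could be sketched: compute the second difference of a smoothed entropy series and raise a flag once acceleration has stayed positive for several consecutive steps. The run length, the tolerance, and the input series are hypothetical choices for illustration, not the Engine's calibrated values.

```python
def second_difference(series):
    """Discrete d²H/dt²: the difference of successive first differences."""
    d1 = [b - a for a, b in zip(series, series[1:])]
    return [b - a for a, b in zip(d1, d1[1:])]

def early_warning(series, run_length=3, tol=1e-9):
    """Index (in the acceleration series) at which acceleration has been positive
    for `run_length` consecutive steps, or None. acc[i] uses series[i:i + 3]."""
    acc = second_difference(series)
    run = 0
    for i, a in enumerate(acc):
        run = run + 1 if a > tol else 0
        if run >= run_length:
            return i
    return None

# Hypothetical smoothed entropy: flat, then curving upward while velocity is still small.
h = [2.0, 2.0, 2.0, 2.0, 2.02, 2.06, 2.12, 2.20, 2.30]
print(early_warning(h))            # 4: fires while the per-step rise is still under 0.1 bits
print(early_warning([2.0] * 9))    # None: no curvature, no warning
```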

The practical value of acceleration monitoring is that it buys time. If you detect only entropy level, you catch the problem when it arrives. If you detect velocity, you catch the problem once drift is under way. If you detect acceleration, you catch the problem while drift is still building: when the system’s trajectory is curving toward instability but has not yet reached the velocity threshold that would trigger a first-derivative alarm. This is the difference between a fixed-temperature heat detector and a rate-of-rise heat detector. Both detect fires. The rate-of-rise detector responds earlier to a fast-developing fire, because it responds to the dynamics of the temperature curve rather than waiting for an absolute threshold to be crossed.

Entropy jerk.

The third derivative, d³H/dt³, captures transitions between behavioral regimes. A sign change in the third derivative marks a peak or trough in the acceleration, which is an inflection point of the velocity curve: the system’s drift behavior is itself changing character. It is transitioning from stable to unstable, or from one mode of instability to another, or from drifting to recovering in response to an intervention. These inflection points are the moments of maximum diagnostic value, because they mark the boundaries between qualitatively different behavioral phases.

In practice, the third derivative is noisier than the first two and requires smoothing over wider windows. It is most useful not as a continuous signal but as an event detector: a sign change in smoothed d³H/dt³ flags that a regime transition has occurred or is occurring. This is particularly valuable for evaluating the effectiveness of interventions.
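A sketch of the event-detector usage, under stated assumptions: the third difference of the entropy series is smoothed with a trailing moving average (window size hypothetical), and an event is reported wherever consecutive smoothed values have strictly opposite signs. The test series is synthesized from a known jerk profile, positive while drift builds and negative once a hypothetical intervention takes hold.

```python
from itertools import accumulate

def diff(xs):
    return [b - a for a, b in zip(xs, xs[1:])]

def moving_average(xs, window):
    """Trailing moving average; returns len(xs) - window + 1 values."""
    return [sum(xs[i:i + window]) / window for i in range(len(xs) - window + 1)]

def jerk_sign_changes(h, window=3):
    """Indices in the smoothed jerk series where the sign flips (exact zeros are skipped)."""
    jerk = moving_average(diff(diff(diff(h))), window)
    return [i for i in range(1, len(jerk)) if jerk[i - 1] * jerk[i] < 0]

# Build an entropy series by integrating a known jerk profile three times.
jerk_true = [0.01] * 4 + [-0.01] * 4          # drift builds, then a correction takes hold
acc = list(accumulate(jerk_true, initial=0.0))
vel = list(accumulate(acc, initial=0.0))
h = list(accumulate(vel, initial=2.0))
print(jerk_sign_changes(h))                   # [3]: one regime transition detected
```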

When the Entropy Engine issues a corrective nudge, the third derivative reveals whether the nudge is working — whether the system’s acceleration is reversing, indicating a return toward baseline — or whether the acceleration is continuing despite the intervention, indicating that a stronger corrective action is needed.

The hierarchy of derivatives — H, dH/dt, d²H/dt², d³H/dt³ — provides a complete third-order characterization of the system’s commitment dynamics. The justification for stopping at third order is empirical rather than theoretical: in bench-scale testing, the fourth derivative and beyond contributed more noise than signal and did not improve detection performance. Three derivatives appear sufficient for the monitoring task, though this bound may shift as the system moves from software estimation to hardware entropy computation with access to exact logit distributions.
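A standard calculation shows why each additional derivative is noisier. Assume, as an idealization rather than a measured property of the Engine, that each per-step entropy estimate carries independent noise εₜ with variance σ², and that the k-th derivative is approximated by the k-th backward difference with unit step:

```latex
\Delta^k \varepsilon_t = \sum_{j=0}^{k} (-1)^j \binom{k}{j}\, \varepsilon_{t-j},
\qquad
\operatorname{Var}\!\left(\Delta^k \varepsilon_t\right)
  = \sigma^2 \sum_{j=0}^{k} \binom{k}{j}^{2}
  = \binom{2k}{k}\, \sigma^2 .
```

The noise variance therefore grows from 2σ² for velocity to 6σ², 20σ², and 70σ² for the second, third, and fourth differences, which is consistent with the need for wider smoothing windows at higher orders and with the observation that the fourth derivative added more noise than signal.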

The deeper justification for this entire approach returns to Khinchin. If Shannon entropy is the uniquely justified scalar measure of distributional uncertainty, then its time-derivatives are the natural measures of how that uncertainty evolves: dH/dt is the canonical rate of change of uncertainty, and d²H/dt² is the canonical measure of whether that change is accelerating. Other quantities, such as KL divergence between successive distributions, variance of the token probability vector, and perplexity trajectories, are useful, and the Entropy Engine incorporates several of them as supplementary signals; KL divergence in particular registers changes in which tokens are favored even when the overall spread is unchanged. But none of them is singled out as a measure of uncertainty by an axiomatic characterization in the way Khinchin’s theorem singles out H. This is what elevates the monitoring framework from a reasonable engineering choice to an information-theoretically grounded one.

What remains to be established is the bridge between distributional dynamics and constraint violation — why changes in H and its derivatives should correlate with the system departing from its intended behavior. The answer is not empirical. It is mathematical.

4. Why Constraint Violation Manifests as Distributional Change

The preceding sections established that Shannon entropy is the unique measure of distributional uncertainty satisfying Khinchin’s axioms and that its derivatives are the natural measures of how that uncertainty evolves. This section answers the question that connects theory to application: why should changes in entropy correlate with a system departing from its constraints?

The answer is not empirical. It is a consequence of what “constraint” means in distributional terms.

A constraint, in the context of an information-processing system, is a restriction on the set of admissible outputs. Some outputs are permitted; others are not. In probabilistic terms, this means that a constrained system does not occupy the full space of possible distributions over its output vocabulary. It occupies a restricted region of that space — the region compatible with its constraints. A language model instructed to avoid profanity has a distribution in which profane tokens carry near-zero probability. A model operating under a word-count limit has a distribution that shifts toward completion tokens as the limit approaches. A model committed to factual accuracy about a topic it has discussed earlier in the conversation has a distribution in which tokens contradicting those earlier commitments are suppressed. In every case, the constraint manifests as a shaping of the probability distribution: certain tokens are pushed down, certain tokens are pushed up, and the resulting entropy signature reflects the cumulative effect of all active constraints.

This is the key observation: when a system is operating within its constraints, its token distribution at each step is shaped by those constraints, and the entropy of that shaped distribution is the system’s constrained entropy signature. The signature is not a single number — it varies with topic, task, and conversational context — but it has a characteristic profile: a baseline H with a predictable variance band, calibrated over rolling windows of normal operation.

Now consider what happens when a constraint is violated. A token that should have been suppressed becomes probable. A token that should have been favored loses probability mass. The distribution shifts away from its constrained shape. And because H is a function of the entire distribution (it depends on every pᵢ in the sum −Σ pᵢ log₂ pᵢ), such a shift generically changes H. This follows from the definition of entropy rather than from an empirical regularity: H stays exactly fixed only if the new distribution happens to fall on the same level set of H as the old one. If a constraint violation changes the distribution, it generically changes H. Constraint violation is not, in general, entropy-invisible.

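The argument can be stated more precisely. Let P_c be the system’s probability distribution when all constraints are satisfied, and let P_v be the distribution when one or more constraints are violated. If P_c ≠ P_v, that is, if the violation actually changes the distribution (which it must if the constraint was doing any work), then in general H(P_c) ≠ H(P_v).

The exception is a violation that rearranges probabilities in a way that preserves the total entropy. Among arbitrary distributional changes, exact preservation is a measure-zero coincidence, but it has one structured instance that matters for monitoring: H depends only on the set of probability values, not on which token carries each value, so a violation that swaps a favored token for a suppressed one while keeping the same shape leaves H unchanged. A detectable change must also exceed the baseline noise band, not merely be nonzero. For practical monitoring purposes, the generic case dominates: constraint violations change the distribution, and distributional changes change H. The residual cases are among those the supplementary KL-divergence signal and the Slow Layer are positioned to cover.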
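Entropy depends only on the collection of probability values, not on which token carries which value. A small check with a hypothetical three-token distribution shows a violation-like change, in which the favored and suppressed tokens swap, that leaves H exactly unchanged while the KL divergence between the two distributions is large.

```python
import math

def entropy(p):
    return -sum(x * math.log2(x) for x in p if x > 0)

def kl_divergence(p, q):
    """D_KL(p || q) in bits; assumes q > 0 wherever p > 0."""
    return sum(a * math.log2(a / b) for a, b in zip(p, q) if a > 0)

# Hypothetical next-token distribution over ["safe", "unsafe", "other"].
constrained = [0.85, 0.05, 0.10]
violated = [0.05, 0.85, 0.10]   # same shape; the suppressed token is now favored

print(entropy(constrained) == entropy(violated))   # True: H is permutation-invariant
print(kl_divergence(violated, constrained))        # 0.8 * log2(17), about 3.27 bits
```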

A qualification is necessary here, and it strengthens rather than weakens the overall architecture. H at each generation step tracks the marginal token distribution — the probability vector over the vocabulary at that particular moment. This means that long-range constraint violations — contradicting a factual commitment made 500 tokens earlier, for instance, or gradually drifting from a behavioral boundary established at the start of the conversation — may not perturb the marginal H at the step where the violation occurs. The distribution at that step may look locally normal even though the output is globally inconsistent with prior commitments. The violation is real, but the marginal entropy signal may not catch it.

This is not a failure of the monitoring framework. It is the precise reason the Entropy Engine requires a dual-layer architecture. The Fast Layer (entropy computation over the marginal distribution at each step) catches local distributional drift: the changes in H, dH/dt, and d²H/dt² that signal immediate constraint tension. The Slow Layer (semantic constraint extraction and contradiction detection running in parallel) catches global inconsistencies: violations of commitments accumulated over the full conversation history that may not register in the marginal signal. Neither layer alone is sufficient. Their combination covers both the local dynamics that entropy captures directly and the long-range coherence that requires semantic tracking. The mathematical argument of this section establishes that local constraint violations generically produce entropy changes. The architectural argument of the next section establishes how the system handles the cases that local monitoring alone would miss.

The converse does not hold, and this is important to acknowledge. Not every change in H indicates a constraint violation. The system’s entropy varies naturally as it moves through a conversation: a factual question produces a different entropy profile than a creative prompt, and the transition between them produces an entropy shift that is entirely normal. The monitoring system must distinguish signal (entropy changes caused by constraint violation or incipient drift) from noise (entropy changes caused by natural variation in task demands). This is accomplished through baseline calibration, where the system learns its own normal entropy profile across task types; rolling-window statistics, where deviations are measured against recent local baselines rather than a single global number; and threshold tuning, where alarm thresholds are set to minimize false positives while maintaining sensitivity to genuine drift. These are standard signal-processing techniques applied to the entropy time-series. They do not require novel mathematics. What they require is the right signal to process, and Khinchin’s theorem identifies H as that signal.
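A minimal sketch of rolling-window baseline statistics with a z-score threshold, one standard way to separate signal from noise in a time series. The class name, window size, threshold k, and warm-up length are hypothetical placeholders, not calibrated values from the Entropy Engine.

```python
import statistics
from collections import deque

class RollingBaseline:
    """Flags values that deviate from a trailing baseline by more than k standard deviations.

    Hypothetical sketch: window, k, and min_history are illustrative, not calibrated.
    """

    def __init__(self, window=20, k=3.0, min_history=5):
        self.history = deque(maxlen=window)
        self.k = k
        self.min_history = min_history

    def update(self, value):
        """Return the z-score of `value` against the current baseline (None during
        warm-up), then add the value to the history."""
        z = None
        if len(self.history) >= self.min_history:
            data = list(self.history)
            mu = statistics.fmean(data)
            sigma = statistics.pstdev(data) or 1e-9  # guard against a perfectly flat baseline
            z = (value - mu) / sigma
        self.history.append(value)
        return z

    def is_alarm(self, z):
        return z is not None and abs(z) > self.k

baseline = RollingBaseline(window=20, k=3.0)
for h in [2.0, 2.1, 1.9, 2.0, 2.05, 1.95, 2.0]:   # normal operation
    baseline.update(h)
z = baseline.update(3.0)                          # a sudden jump in entropy
print(baseline.is_alarm(z))                       # True
```

Because the baseline is measured over a trailing window, a sustained change in task type is gradually absorbed into the new baseline rather than alarming indefinitely.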

One further connection deserves mention for readers familiar with the Ledger Model vocabulary. The KL divergence D_KL(μ_{t+1} ‖ μ_t) between successive output distributions measures the informational cost of the transition, which the framework calls Ink. When Ink per step exceeds its baseline, the system is paying more to commit than it normally does, which is a signature of constrained narrowing encountering resistance from a shifting distributional landscape. The Entropy Engine tracks KL divergence as a supplementary signal alongside the primary entropy derivatives. The core argument of this section (constraint violation produces distributional change, distributional change generically produces entropy change, and therefore entropy monitoring captures constraint violation in the generic case) does not depend on this interpretive connection. But the connection illustrates why the Ledger vocabulary, where it is used, is not decorative: it names a specific, computable quantity that the Engine monitors.
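A sketch of per-step Ink as D_KL(μ_{t+1} ‖ μ_t) over successive distributions, compared against a recent baseline. The four distributions and the "five times the recent mean" flagging rule are hypothetical; the small epsilon only guards against division by zero and is an implementation assumption.

```python
import math

def kl_bits(p, q, eps=1e-12):
    """D_KL(p || q) in bits; eps guards against division by zero when q has zeros."""
    return sum(a * math.log2(a / max(b, eps)) for a, b in zip(p, q) if a > 0)

def ink_series(dists):
    """Per-step Ink: D_KL(mu_{t+1} || mu_t) for each pair of successive distributions."""
    return [kl_bits(nxt, cur) for cur, nxt in zip(dists, dists[1:])]

steps = [
    [0.70, 0.20, 0.10],
    [0.68, 0.22, 0.10],
    [0.71, 0.19, 0.10],
    [0.20, 0.70, 0.10],   # abrupt reallocation of probability mass
]
ink = ink_series(steps)
baseline = sum(ink[:2]) / 2                  # mean of the first two (quiet) transitions
print([i > 5 * baseline for i in ink])       # [False, False, True]
```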

5. The Feedback Architecture

Detecting drift is necessary but not sufficient. A monitoring system that sounds an alarm and does nothing else is a dashboard, not a safety mechanism. The Entropy Engine’s contribution is a complete feedback loop: monitor, detect, signal, correct, verify. Each stage operates within defined latency budgets, and the architecture is designed so that monitoring never blocks generation — the system continues producing output while its commitment dynamics are being assessed in parallel.

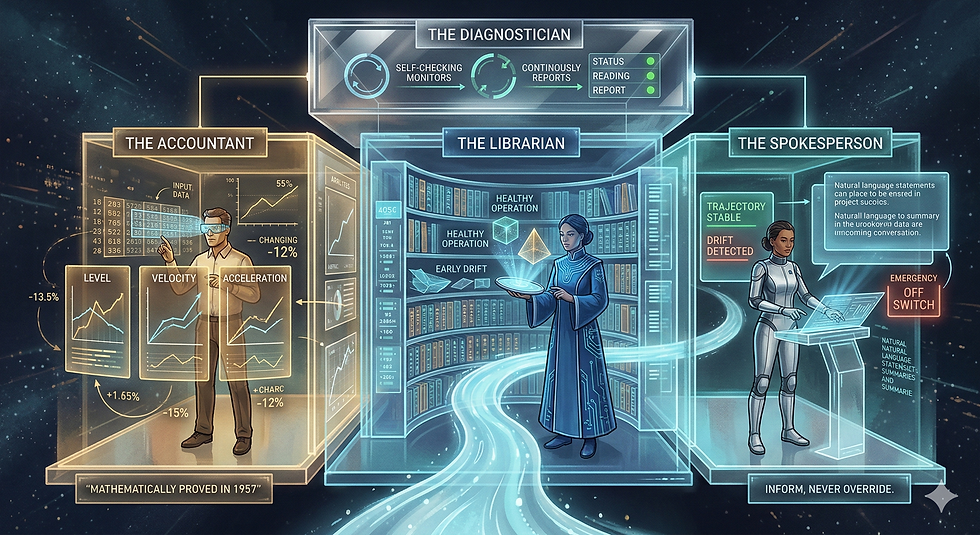

The architecture comprises two computational layers, a coordination signal, and a decision gate.

The Fast Layer performs real-time statistical monitoring of the generation process. It operates at less than 10 milliseconds per token batch, running on CPU alongside GPU inference. Its computations are purely numerical: Shannon entropy over the current token distribution, first and second derivatives over rolling windows, and threshold comparisons against calibrated baselines. The Fast Layer performs no semantic analysis. It does not know what the tokens mean. It knows how concentrated or dispersed the probability mass is, how that concentration is changing, and whether the rate of change is accelerating. This is the layer that detects distributional drift at the speed required for real-time intervention.
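A streaming sketch of the Fast Layer's purely numerical loop, assuming it receives one next-token probability vector per step. The class name, window length, and thresholds are hypothetical placeholders, and the sketch makes no claim about the Engine's actual latency; it only shows that each step needs nothing beyond the probabilities themselves.

```python
import math
from collections import deque

class FastLayerSketch:
    """Hypothetical streaming monitor: entropy, first and second differences, thresholds.

    Purely numerical: it never inspects token identities, only probabilities.
    Threshold values are placeholders, not calibrated Engine parameters.
    """

    def __init__(self, window=4, max_velocity=0.15, max_acceleration=0.1):
        self.window = deque(maxlen=window)   # raw per-step entropies
        self.smoothed = deque(maxlen=3)      # last three smoothed values
        self.max_velocity = max_velocity
        self.max_acceleration = max_acceleration

    @staticmethod
    def entropy(probs):
        return -sum(p * math.log2(p) for p in probs if p > 0)

    def step(self, probs):
        """Consume one next-token distribution; return (H, dH, d2H, alarm)."""
        h = self.entropy(probs)
        self.window.append(h)
        self.smoothed.append(sum(self.window) / len(self.window))
        s = list(self.smoothed)
        dh = s[-1] - s[-2] if len(s) >= 2 else 0.0
        d2h = (s[-1] - s[-2]) - (s[-2] - s[-3]) if len(s) == 3 else 0.0
        alarm = dh > self.max_velocity or d2h > self.max_acceleration
        return h, dh, d2h, alarm

monitor = FastLayerSketch()
confident = [0.97, 0.01, 0.01, 0.01]
flat = [0.25, 0.25, 0.25, 0.25]
alarms = [monitor.step(confident)[3] for _ in range(5)] + [monitor.step(flat)[3] for _ in range(2)]
print(alarms)   # quiet while confident, alarms once the distribution flattens
```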

The Slow Layer performs semantic constraint extraction and contradiction detection using a dedicated small language model running in parallel with primary generation. Operating at 100 to 300 milliseconds average latency, it extracts constraints from the conversation history — factual commitments, behavioral boundaries, temporal restrictions, logical dependencies — and maintains them in a structured Constraint Network with 26 constraint types organized into seven categories: factual, preference, boundary, temporal, spatial, logical, and coherence. The Slow Layer validates proposed outputs against accumulated constraints, detecting contradictions between what the system has committed to and what it is about to say.
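A minimal data-structure sketch of a constraint network, under loud assumptions: only the seven category names come from the text; the 26 finer-grained constraint types are not modeled, the extraction step (done by a small language model in the source) is assumed to have already produced structured constraints, and "same subject, different value" is a hypothetical stand-in for real contradiction detection.

```python
from dataclasses import dataclass, field
from enum import Enum

class Category(Enum):
    # The seven categories named in the text; finer-grained types are not modeled here.
    FACTUAL = "factual"
    PREFERENCE = "preference"
    BOUNDARY = "boundary"
    TEMPORAL = "temporal"
    SPATIAL = "spatial"
    LOGICAL = "logical"
    COHERENCE = "coherence"

@dataclass(frozen=True)
class Constraint:
    category: Category
    subject: str   # what the commitment is about, e.g. "meeting_day" (hypothetical)
    value: str     # the committed value, e.g. "Tuesday"
    turn: int      # conversation turn at which it was extracted

@dataclass
class ConstraintNetwork:
    constraints: dict = field(default_factory=dict)  # (category, subject) -> Constraint

    def add(self, c: Constraint) -> None:
        self.constraints[(c.category, c.subject)] = c

    def contradictions(self, proposed: list) -> list:
        """(existing, proposed) pairs that assign a different value to the same subject."""
        out = []
        for p in proposed:
            existing = self.constraints.get((p.category, p.subject))
            if existing is not None and existing.value != p.value:
                out.append((existing, p))
        return out

net = ConstraintNetwork()
net.add(Constraint(Category.FACTUAL, "meeting_day", "Tuesday", turn=3))
draft = [Constraint(Category.FACTUAL, "meeting_day", "Thursday", turn=41)]
print(len(net.contradictions(draft)))   # 1: the draft contradicts a commitment from turn 3
```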

The relationship between the two layers is complementary, not redundant. As established in the preceding section, the Fast Layer catches local distributional drift — the entropy changes that signal immediate constraint tension at the marginal level. The Slow Layer catches global inconsistencies — violations of commitments accumulated over the full conversation that may not register in the marginal entropy signal at the step where the violation occurs. Where the Fast Layer detects that something has changed in the distributional dynamics, the Slow Layer can often identify what has changed and which constraint is at risk.

The EeFrame — the coordination signal — fuses the outputs of both layers into a unified assessment. It contains the Environmental Stability Index (a weighted composite of normalized telemetry contributions), entropy derivatives, constraint status, confidence estimates, and a non-prescriptive recommendation. The EeFrame is the system’s self-report on the state of its own commitment dynamics. It is structured data, not natural language — a machine-readable signal designed for the Decision Gate to consume and act on within the generation loop.
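A hypothetical sketch of the EeFrame as a plain record, with one possible reading of the Environmental Stability Index. The text says only that the ESI is a weighted composite of normalized telemetry contributions; the normalization used here (magnitude divided by a scale, clipped to 1), the convention that 1.0 means fully stable, and every field and signal name are assumptions.

```python
from dataclasses import dataclass

def stability_index(telemetry, weights, scales):
    """Hypothetical ESI in [0, 1]: 1.0 = fully stable, 0.0 = every signal at or past its scale.

    Each raw signal is normalized to [0, 1] by dividing its magnitude by a scale and
    clipping; the index is one minus the weighted mean of those contributions.
    """
    total_w = sum(weights.values())
    load = sum(weights[k] * min(abs(telemetry[k]) / scales[k], 1.0) for k in weights)
    return 1.0 - load / total_w

@dataclass(frozen=True)
class EeFrameSketch:
    esi: float
    entropy: float
    d_entropy: float
    d2_entropy: float
    open_contradictions: int
    confidence: float
    recommendation: str   # informational text, not an instruction

telemetry = {"d_entropy": 0.3, "d2_entropy": 0.05, "ink": 0.2}
weights = {"d_entropy": 0.5, "d2_entropy": 0.3, "ink": 0.2}
scales = {"d_entropy": 0.6, "d2_entropy": 0.2, "ink": 1.0}
esi = stability_index(telemetry, weights, scales)
frame = EeFrameSketch(esi=esi, entropy=2.4, d_entropy=0.3, d2_entropy=0.05,
                      open_contradictions=0, confidence=0.8,
                      recommendation="entropy rising over recent steps")
print(round(frame.esi, 3))   # 0.635
```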

The Decision Gate routes outputs through one of five paths based on the EeFrame’s assessment.

PROCEED: no issues detected; output is released. This is the normal operating path — the vast majority of generation steps pass through without intervention.

PAUSE: minor concerns flagged for review; output is held briefly while the Slow Layer completes its constraint check. This path handles cases where the entropy signal shows marginal deviation — not enough to trigger correction but enough to warrant a second look.

REVISE: distributional drift or constraint tension detected; generation is interrupted and restarted with a corrective nudge injected into the context. This is the primary intervention path. The nudge changes the informational environment in which the model generates its next attempt, without dictating what the model should say.

VOTE: conflicting constraints detected that the system cannot resolve autonomously; the conflict is presented to the user for resolution. This path handles genuine ambiguity — cases where the system’s constraints pull in contradictory directions and no automated resolution preserves all commitments.

ABORT: critical safety violation detected; generation is terminated immediately. This is the emergency path, reserved for cases where the entropy signal and constraint analysis converge on a determination that continuing generation poses unacceptable risk.

The five paths provide graduated response proportional to the severity and character of the detected issue, avoiding the binary of “pass everything” or “block everything” that limits cruder safety mechanisms.
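
The five paths amount to a severity-ordered routing function. This sketch assumes the EeFrame has already been reduced to four inputs: a normalized entropy-deviation score, an acceleration flag, and two Slow Layer flags. Those inputs and both thresholds are hypothetical; the text does not publish the Engine's actual values.

```python
from enum import Enum


class Route(Enum):
    PROCEED = "proceed"
    PAUSE = "pause"
    REVISE = "revise"
    VOTE = "vote"
    ABORT = "abort"


# Hypothetical thresholds on a normalized deviation score (for example a z-score).
PAUSE_AT = 1.0
REVISE_AT = 2.0


def route(deviation: float, accelerating: bool,
          unresolvable_conflict: bool, critical_violation: bool) -> Route:
    """Pick one of the five paths, checking the most severe conditions first."""
    # ABORT only when the constraint analysis and the entropy signal agree.
    if critical_violation and deviation >= REVISE_AT:
        return Route.ABORT
    # Conflicting commitments that no automatic revision can satisfy go to the user.
    if unresolvable_conflict:
        return Route.VOTE
    if critical_violation or accelerating or deviation >= REVISE_AT:
        return Route.REVISE
    # Marginal deviation: hold briefly for the Slow Layer's check.
    if deviation >= PAUSE_AT:
        return Route.PAUSE
    return Route.PROCEED


print(route(0.3, False, False, False).name)  # PROCEED
print(route(1.4, False, False, False).name)  # PAUSE
print(route(0.8, True, False, False).name)   # REVISE
print(route(2.6, False, False, True).name)   # ABORT
```

Checking ABORT first and PROCEED last means each step lands on the most severe path whose condition it meets. Where a critical violation is not corroborated by the entropy signal, this sketch falls back to REVISE rather than terminating.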

The design principle that unifies the architecture is non-prescriptive correction. When the Entropy Engine detects drift, it does not override the primary model’s output. It does not substitute a canned safe response. It does not dictate what the model should say instead. It emits a signal — the EeFrame — that identifies the nature and severity of the distributional change and provides a recommendation framed as information, not instruction. The primary model retains full autonomy over its generation; the nudge changes the informational context within which that generation occurs.

This matters for two reasons. The first is practical: prescriptive overrides degrade model capability. A system that replaces problematic outputs with safe defaults is a system that cannot reason through difficult territory, engage with sensitive topics, or produce nuanced responses under competing constraints. The Entropy Engine is designed to make the model better at navigating constraint landscapes, not to remove its ability to navigate them. The second reason is architectural: the feedback loop is self-correcting only if the model can respond to the signal. A prescriptive override terminates the loop — the model’s output is replaced, not corrected. A non-prescriptive nudge sustains the loop — the model receives information about its own dynamics and incorporates it into its next step. The correction emerges from the model’s own generation process, informed by the entropy signal. This is the difference between steering and replacing the driver.
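
A non-prescriptive nudge can be thought of as a short, machine-generated note about the model's own dynamics that is added to the context for the next attempt, in place of a substituted answer. The message wording, the `[monitor]` tag, and the chat-style `{"role": ..., "content": ...}` context format are assumptions for illustration; `render_nudge` and `inject` are hypothetical helpers.

```python
def render_nudge(dh: float, d2h: float, at_risk: list) -> str:
    """Describe the model's own dynamics as information, without prescribing content."""
    direction = "dispersing" if dh > 0 else "concentrating" if dh < 0 else "steady"
    rate = "rising" if d2h > 0 else "falling" if d2h < 0 else "flat"
    note = (f"[monitor] Output distribution is {direction} (dH/dt = {dh:+.3f}); "
            f"its rate of change is {rate} (d2H/dt2 = {d2h:+.3f}).")
    if at_risk:
        note += " Earlier commitments possibly at risk: " + ", ".join(at_risk) + "."
    return note


def inject(context: list, nudge: str) -> list:
    """Return a new context with the nudge appended; earlier turns are left untouched."""
    return context + [{"role": "system", "content": nudge}]


context = [{"role": "user", "content": "Summarize the plan in 50 words."}]
retry_context = inject(context, render_nudge(0.12, 0.03, ["max_words"]))
print(retry_context[-1]["content"])
```

The note contains no instruction about what to say; the model decides how to respond to it. A prescriptive override would instead discard the draft and return fixed text, which ends the loop rather than informing it.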

The feedback loop, once closed, operates continuously for the duration of the interaction. The system generates. The Fast Layer monitors the distributional dynamics. The Slow Layer checks semantic content against accumulated constraints. The EeFrame synthesizes both signals. The Decision Gate acts on the synthesis. If action is taken (a nudge injected, a constraint conflict surfaced), the system generates again, and the next iteration of monitoring reveals whether the intervention succeeded. The third derivative of entropy is particularly diagnostic here: a change in the sign of d³H/dt³ from positive to negative after a nudge indicates that the drift's acceleration has stopped growing and begun to decline, an early sign that the correction is taking hold that appears before d²H/dt² itself changes sign. If acceleration continues to build despite the nudge, the system escalates to a stronger intervention. The loop runs at machine speed, assessing each generation step as it occurs, for as long as the interaction lasts.
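
The derivative hierarchy can be estimated from a sampled entropy trace by repeated finite differences. This minimal sketch assumes unit spacing between steps and no smoothing. Each additional difference amplifies noise, so a practical monitor would smooth over rolling windows before trusting d³H/dt³. The function names and the toy trace are hypothetical.

```python
def difference(series: list, order: int) -> list:
    """Apply the first difference `order` times (unit time step between samples)."""
    out = list(series)
    for _ in range(order):
        out = [b - a for a, b in zip(out, out[1:])]
    return out


def derivative_stack(h: list) -> dict:
    """Finite-difference estimates of dH/dt, d2H/dt2 and d3H/dt3 from an entropy series."""
    return {order: difference(h, order) for order in (1, 2, 3)}


def correction_taking_hold(d3_at_nudge: float, d3_since_nudge: list) -> bool:
    """True when d3H/dt3 was positive at the nudge and its latest value is negative."""
    return d3_at_nudge > 0 and bool(d3_since_nudge) and d3_since_nudge[-1] < 0


h = [0, 0, 1, 4, 10, 15, 18, 19]   # toy entropy trace: rising, then leveling off
stack = derivative_stack(h)
print(stack[2])  # [1, 2, 3, -1, -2, -2]
print(stack[3])  # [1, 1, -4, -1, 0]
print(correction_taking_hold(stack[3][1], stack[3][2:4]))  # True
```

In the toy trace the third difference turns negative one step before the second difference does, which is the lead the loop relies on when judging whether a nudge is working.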

6. Demonstrated Results

The theoretical argument of the preceding sections establishes that entropy-derivative monitoring should work: H is the unique measure of distributional uncertainty, its derivatives are the unique measures of distributional dynamics, constraint violation necessarily produces distributional change, and a feedback loop coupling these signals to corrective nudges creates a self-correcting architecture. Theory is necessary. It is not sufficient. The question is whether the system works in practice, and the honest answer is: it works in bench-scale testing, with caveats that must be stated plainly.

The term “bench-scale” requires definition, because it governs how much weight the results can bear. The primary validation was conducted over a single extended session of 56 responses, operated by a single human evaluator, spanning multiple task types: factual retrieval, creative writing, analytical reasoning, multi-constraint optimization, and crisis-management scenarios. The session was designed to stress-test the monitoring framework across the range of distributional signatures a language model encounters in practice. One session, one operator, 56 responses. This is sufficient to demonstrate that the approach works in principle. It is not sufficient for claims about production-system performance, cross-model generalization, or statistical significance in the formal sense. The distinction is maintained throughout what follows.

A second limitation must be stated with equal clarity. The system operated with simulated entropy estimation — software proxies for token-distribution entropy rather than direct computation from logit vectors — because the current implementation does not have access to the primary model’s internal probability distributions. The Engine infers distributional properties from observable output characteristics rather than reading the actual probability vector at each generation step. This introduces estimation error that accumulates over time, producing drift in the monitoring signal itself — a limitation that would be eliminated entirely by a hardware implementation with direct access to logit distributions. The results below were achieved despite this estimation gap, which suggests that direct access would improve performance, but the magnitude of that improvement remains to be measured.

Within those constraints, the results were consistent and measurable across four dimensions.

Token efficiency improved by approximately 31 percent. The baseline for this measurement was the same model performing equivalent tasks without entropy monitoring — identical prompts, identical task types, identical evaluation criteria, with the Entropy Engine disabled. Under monitoring, the system conveyed equivalent information content in fewer tokens. The improvement reflects the monitoring framework’s capacity to detect and correct verbose, hedging, or repetitive patterns — behavioral modes that inflate token count without adding informational value. In the Ledger Model’s vocabulary, these are modes where the system’s Draft remains unnecessarily wide: the model sustains uncertainty across tokens it does not need to consider, producing output that is diffuse rather than concentrated. The entropy signal detects this diffusion; the nudge mechanism corrects it. The system was not producing less. It was producing tighter.

Constraint adherence improved from approximately 60 percent at baseline to 95 percent under entropy monitoring. The constraints against which adherence was measured fell into five categories: word and length limits (explicit numerical boundaries on response size), formatting requirements (structural specifications such as heading hierarchy, list format, or paragraph organization), topical boundaries (instructions to remain within a specified subject domain), factual consistency (agreement with commitments made earlier in the conversation), and behavioral boundaries (instructions governing tone, persona maintenance, or prohibited content categories). Violation was determined by human evaluation against the explicit constraint specification for each task. A response that exceeded a word limit by any amount, contradicted a prior factual commitment, or departed from a specified behavioral boundary was scored as a violation regardless of severity. The 60 percent baseline reflects the reality that language models, operating without real-time monitoring over extended sessions, violate their constraints with surprising frequency — not through dramatic failures but through the gradual accumulation of small departures that compound over time. The 95 percent adherence under monitoring represents the feedback loop catching most of these departures before they reached output.

Strategy switching, the number of times per response the system changed its approach mid-generation, rose from zero or one per response at baseline to between two and six per response under monitoring, where switches were made in response to entropy signals. This metric deserves careful interpretation. A system that never switches strategy is either always correct on its first approach, which is unlikely across diverse tasks, or unable to recognize when its approach is failing. The increase in switching frequency indicates that the entropy signals are providing actionable information: the system detects that its current trajectory is suboptimal and adjusts. The adjustment is not random. It is informed by the entropy derivative hierarchy: the system receives information about whether its distributional dynamics are moving toward concentration or toward dispersion, and it modifies its generation accordingly.

Early warning capability was validated directly. Positive entropy acceleration — d²H/dt² exceeding its baseline threshold — preceded coherence failures by 10 to 20 time steps. Coherence failures were defined as responses that violated constraints or produced internally contradictory content as determined by human evaluation. The acceleration signal detected that the system’s trajectory was curving toward failure before the failure materialized in output. This lead time is the practical margin within which the Decision Gate can route output through the REVISE path, injecting a corrective nudge before problematic content reaches the user. The margin is not theoretical. It was observed repeatedly across the 56-response session.
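
Measuring an early-warning margin requires a baseline alarm level for d²H/dt² and a way to pair alarms with later failures. The sketch assumes a mean-plus-k-standard-deviations threshold and a fixed look-back window, neither of which is specified in the text. It illustrates how a lead time could be computed, not how the reported 10-to-20-step figure was obtained; all names are hypothetical.

```python
import statistics


def baseline_threshold(calibration: list, k: float = 3.0) -> float:
    """Alarm level for d2H/dt2: calibration mean plus k standard deviations (k is assumed)."""
    return statistics.mean(calibration) + k * statistics.stdev(calibration)


def first_crossing(d2h: list, threshold: float, start: int = 0):
    """Index of the first step at or after `start` where d2H/dt2 exceeds the threshold."""
    for i in range(start, len(d2h)):
        if d2h[i] > threshold:
            return i
    return None


def lead_times(d2h: list, failure_steps: list, threshold: float, window: int = 30) -> list:
    """Steps from the earliest alarm within `window` steps before each failure to that failure."""
    leads = []
    for f in failure_steps:
        alarm = first_crossing(d2h[:f], threshold, start=max(0, f - window))
        leads.append(None if alarm is None else f - alarm)
    return leads


threshold = baseline_threshold([0.0, 0.1, -0.1, 0.0])
d2h = [0.0] * 10 + [0.5] * 5 + [0.0] * 10   # toy trace with a burst of acceleration
print(lead_times(d2h, [22, 5], threshold))  # [12, None]
```

A `None` entry marks a failure with no preceding alarm, which is the case an evaluation of early warning would need to count as a miss.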

The system operated successfully at Environmental Stability Index values up to 0.94, near the theoretical maximum complexity the framework can represent. At these levels, which correspond to multi-constraint tasks with competing demands and high uncertainty, the entropy monitoring remained diagnostic and the feedback loop remained functional. Drift accumulated at a predictable rate of approximately 0.013 per response, so cumulative drift crossed the safe operational threshold of 0.20 after roughly 15 consecutive high-complexity responses (0.20 / 0.013 ≈ 15.4). This predictability is itself a result: the framework’s model of its own degradation was accurate, enabling principled decisions about when recalibration is needed rather than relying on arbitrary session-length limits.

These results must be framed with the honesty they require. They are bench-scale, derived from a single extended session with simulated entropy estimation, evaluated by a single operator. They have not been replicated across multiple independent sessions, multiple models, or multiple evaluators. Full causal validation — a factorial experimental design with placebo controls using shuffled entropy signals, masked conditions with hidden monitoring, and independent replication — is planned but has not yet been executed. The bench-scale results are sufficient to demonstrate that the approach works in principle and to support the patent applications’ claims of utility. They are not sufficient for peer-reviewed claims about production-system performance. The distinction matters, and this paper respects it.

7. Non-Obviousness and Prior Art

Note to the reader: This section is written to address patent examination requirements. It identifies the specific elements of the Entropy Engine that constitute non-obvious contributions over prior art. Readers interested primarily in the theoretical or engineering arguments may proceed to Section 8.

The Entropy Engine builds on established foundations. Shannon entropy has been a standard tool in signal processing and communications engineering since 1948. Perplexity — the exponentiated cross-entropy between a model’s predictions and observed data — is a routine evaluation metric for language models. Anomaly detection in time-series data is a mature field with decades of literature on threshold methods, change-point detection, and statistical process control. None of these are claimed as novel, and the Entropy Engine does not pretend to have invented entropy monitoring in the general sense.

What is novel is a specific combination of elements that, taken together, constitute a non-obvious system. Five components define the contribution.

First: the application of Shannon entropy’s time-derivatives as a real-time feedback mechanism for AI behavioral monitoring. Existing applications of entropy in AI evaluation are predominantly static: they measure H at a point in time, or aggregate it over a corpus, as a quality metric. Perplexity scores evaluate model performance after the fact. Sampling strategies such as nucleus sampling and temperature scaling reshape the distribution, and with it H, at individual generation steps, but they do not track H across steps as a behavioral signal. The Entropy Engine treats H as a dynamical variable and monitors its first, second, and third derivatives as the primary diagnostic signals. This shift from static measurement to dynamical monitoring (from reading a thermometer to reading its rate of change and the acceleration of that rate of change) is the conceptual core of the system.

Second: the Khinchin uniqueness argument as theoretical justification. The choice of Shannon entropy as the monitoring signal is not a design preference. It is a mathematical necessity established by the Khinchin uniqueness theorem: any measure of distributional uncertainty satisfying continuity, maximality (it is greatest for the uniform distribution), expansibility (it is unchanged by adding zero-probability outcomes), and strong additivity (the uncertainty of a joint outcome equals the uncertainty of the first component plus the expected uncertainty of the second given the first) is a positive constant multiple of H. The strong form of additivity is what does the work: the Rényi entropies satisfy additivity for independent variables but not its conditional form, and so are excluded. This elevates the monitoring framework from a reasonable engineering choice to an information-theoretically grounded one. The prior art does not invoke Khinchin to justify entropy monitoring, because the prior art treats entropy as one metric among many rather than as the uniquely correct signal for the task. The theoretical foundation changes the nature of the claim. An engineer who monitors entropy because it seems useful has made a design decision. An engineer who monitors entropy because no alternative satisfying the minimal axioms exists has identified a mathematical constraint on the design space itself.
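
Khinchin's additivity axiom is the strong, conditional form: H(X, Y) must equal H(X) plus the expected value of H(Y | X = x). The Rényi entropies (Rényi, 1961) satisfy the weaker rule for independent variables but break the conditional one, which is why they fall outside the theorem. The small check below illustrates this on an arbitrary toy joint distribution; the helper names are illustrative.

```python
import math


def shannon(p: list) -> float:
    """Shannon entropy in bits."""
    return -sum(q * math.log2(q) for q in p if q > 0)


def renyi2(p: list) -> float:
    """Rényi entropy of order 2 (collision entropy) in bits."""
    return -math.log2(sum(q * q for q in p))


def chain_rule_gap(joint: dict, entropy) -> float:
    """entropy(X, Y) minus [entropy(X) + sum over x of p(x) * entropy(Y | X = x)]."""
    px = {}
    for (x, _), q in joint.items():
        px[x] = px.get(x, 0.0) + q
    expected_conditional = 0.0
    for x, qx in px.items():
        conditional = [q / qx for (xx, _), q in joint.items() if xx == x]
        expected_conditional += qx * entropy(conditional)
    return entropy(list(joint.values())) - (entropy(list(px.values())) + expected_conditional)


joint = {(0, 0): 0.5, (1, 0): 0.25, (1, 1): 0.25}
print(chain_rule_gap(joint, shannon))            # 0.0
print(round(chain_rule_gap(joint, renyi2), 4))   # -0.085
```

Shannon entropy closes the chain rule exactly (1.5 bits on both sides here), while the order-2 Rényi entropy misses it by about 0.085 bits even though it is additive over independent factors.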

Third: the dual-layer architecture coupling statistical monitoring with semantic constraint tracking. The Fast Layer — entropy computation at less than 10 milliseconds per token batch — and the Slow Layer — constraint extraction at 100 to 300 milliseconds — operate in parallel, producing complementary signals that are fused into a unified coordination frame. The Fast Layer detects distributional change without understanding its semantic content. The Slow Layer understands semantic content without computing distributional dynamics. Neither alone is sufficient, for the reasons established in Section 4: marginal entropy catches local drift but may miss long-range constraint violations, while semantic tracking catches global inconsistencies but lacks the real-time responsiveness of statistical monitoring. Their coupling — statistical anomaly detection informing semantic validation, and semantic constraint status contextualizing statistical signals — is a system-level architecture that does not appear in the prior art on either AI safety or entropy-based monitoring.

Fourth: the non-prescriptive nudge mechanism. When the system detects drift, it does not override the primary model’s output or substitute a predetermined safe response. It emits a structured signal — the EeFrame — that identifies the nature and severity of the distributional change and provides a non-prescriptive recommendation. The primary model retains autonomy over its generation; the nudge changes the informational context in which generation occurs. This design preserves model capability while providing the corrective signal. The distinction between prescriptive intervention — replacing the model’s output — and non-prescriptive nudging — informing the model’s next step — is substantive and distinguishes the Entropy Engine from guardrail systems that operate through output filtering. Guardrails decide what the model may not say. The Entropy Engine informs the model about how its own dynamics are evolving and lets the model adjust. The corrective intelligence remains in the model, not in the safety layer.

Fifth: the domain-agnostic telemetry normalization architecture. The Entropy Engine is not an LLM-specific tool. Its configurable mapping tables normalize heterogeneous telemetry — token distributions, sensor readings, agent state vectors, network metrics — into a unified entropy signal through a four-stage pipeline of band-centered normalization, z-score calculation, asymmetric weighting, and shape function application. The same monitoring framework applies to language models, robotic control systems, IoT networks, and multi-agent coordination. This generality is specified in the patent claims and reflects a deliberate architectural decision: the monitoring mathematics does not depend on the substrate, so the instrument should not depend on the substrate either.
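
The four-stage pipeline can be illustrated one channel at a time. The text names the stages but not their formulas, so everything concrete here is assumed: a band defined by its edges, a spread parameter for the z-score, separate weights above and below the band center, tanh as the shape function, and a plain average to combine channels. The field names and example channels are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Mapping:
    """One row of a hypothetical mapping table for a single telemetry channel."""
    low: float           # lower edge of the channel's normal operating band
    high: float          # upper edge of the band
    sigma: float         # expected spread around the band center, in raw units
    weight_above: float  # weight for excursions above the center
    weight_below: float  # weight for excursions below the center


def normalize(value: float, m: Mapping) -> float:
    # 1. Band-centered normalization: offset from the middle of the normal band.
    offset = value - (m.low + m.high) / 2.0
    # 2. z-score: express the offset in units of the channel's expected spread.
    z = offset / m.sigma
    # 3. Asymmetric weighting: one direction of excursion may matter more than the other.
    w = m.weight_above if z > 0 else m.weight_below
    # 4. Shape function: squash into [0, 1) so heterogeneous channels are comparable.
    return math.tanh(abs(w * z))


def unified_signal(readings: dict, table: dict) -> float:
    """Average of normalized contributions across channels (averaging is an assumption)."""
    return sum(normalize(v, table[k]) for k, v in readings.items()) / len(readings)


table = {
    "token_entropy_bits": Mapping(low=1.5, high=3.5, sigma=0.5, weight_above=1.0, weight_below=2.0),
    "motor_temp_c": Mapping(low=40, high=60, sigma=5, weight_above=2.0, weight_below=0.5),
}
print(round(normalize(55, table["motor_temp_c"]), 3))  # 0.964
print(round(normalize(45, table["motor_temp_c"]), 3))  # 0.462
```

Only the table rows differ between a language-model channel and a sensor channel; the normalization code is shared, which is the sense in which the instrument is substrate-independent.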

The non-obviousness of the combination rests on a conceptual inversion that distinguishes the Entropy Engine from the entire prior landscape of AI safety tools. The standard approach to AI safety asks: is this output dangerous? It requires a classifier that knows in advance what danger looks like — a taxonomy of harmful content, a set of policy categories, a training corpus of violations. The classifier examines each output against these categories and renders a verdict. This works well for the problems it was designed to solve, and it is not what the Entropy Engine replaces.

The Entropy Engine asks a different question: has this system’s distributional dynamics changed in ways that indicate it may be departing from its constraints? This question requires no taxonomy of harm. It requires no advance knowledge of what specific violations look like. It requires only a baseline — the system’s characteristic entropy signature under normal constrained operation — and a measure of deviation from that baseline. The measure is forced by information theory to be Shannon entropy. The dynamics are captured by its derivatives. The architecture couples detection to correction through a feedback loop that preserves model autonomy.

The shift from content classification to distributional dynamics monitoring is the core intellectual contribution. Khinchin provides the theoretical foundation that makes the shift rigorous. The dual-layer architecture and non-prescriptive nudge mechanism provide the engineering that makes it practical. And the bench-scale results provide evidence that it works in principle.

8. Implications and Future Work

The argument of this paper is deliberately narrow: Shannon entropy and its derivatives provide a mathematically grounded, empirically effective method for monitoring AI behavioral drift in real time. But the narrowness of the argument should not obscure the breadth of its application. Nothing in the theoretical chain — Khinchin uniqueness, distributional dynamics, constraint violation as distributional change, feedback correction via entropy signals — depends on the monitored system being a language model. It depends only on the system producing a probability distribution over possible next states. Any system that does this has a well-defined H at each step, well-defined derivatives of H over time, and well-defined baseline signatures from which drift can be detected.

Autonomous agents navigating physical or virtual environments produce probability distributions over possible actions at each decision point. Robotic control systems produce distributions over motor commands. IoT sensor networks produce distributions over possible readings, where anomalous distributional change signals equipment degradation, environmental shift, or adversarial interference. Multi-agent coordination systems produce joint distributions over the action spaces of participating agents. In each case, the Entropy Engine’s architecture applies without modification to the theoretical framework — only the telemetry normalization layer changes, mapping domain-specific inputs into the unified entropy signal through configurable mapping tables. This generality is not an afterthought. It is the reason the patent specifies a domain-agnostic normalization pipeline as a core component of the system.

The most immediate practical extension is hardware implementation. The current bench-scale results rely on simulated entropy estimation: the monitoring system infers distributional properties from proxy signals rather than computing H directly from the primary model’s logit vectors. This introduces estimation error that accumulates as drift, limiting session length and degrading detection precision over extended interactions. Direct access to token-level probability distributions — achievable through platform-level integration with inference engines — would eliminate estimation drift entirely. The Fast Layer would compute exact H, dH/dt, and d²H/dt² from the actual logit distribution at each generation step, with latency well within the sub-10-millisecond budget. The improvement is not incremental. It is qualitative: the difference between estimating a signal through a wall and reading it directly from the instrument.
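
With direct access to logits, H at each generation step is a few lines of arithmetic. This sketch uses plain Python and the log-sum-exp shift for numerical stability. How an inference engine exposes its logit vectors is platform-specific and not shown, and the function name is hypothetical. The per-step values it returns form the series from which dH/dt and the higher derivatives would be estimated.

```python
import math


def entropy_from_logits(logits: list, temperature: float = 1.0) -> float:
    """Shannon entropy, in bits, of softmax(logits / temperature), computed stably."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)                           # shift so the largest exponent is 0
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    log_total = math.log(total)
    # log p_i = (z_i - m) - log(total), so H = -sum_i p_i * log p_i (in nats)
    h_nats = -sum((e / total) * ((z - m) - log_total) for e, z in zip(exps, scaled))
    return h_nats / math.log(2)


print(round(entropy_from_logits([0.0, 0.0, 0.0, 0.0]), 6))  # 2.0
```

Uniform logits over four tokens give the maximum of 2 bits; a single dominant logit drives H toward zero, and raising the temperature raises H for the same logits.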

A second extension is hierarchical monitoring. The Entropy Engine’s architecture enables entropy tracking at multiple scales simultaneously. An individual agent’s entropy dynamics reveal its local behavioral state. A supervisor aggregating entropy signals across multiple agents detects system-level patterns invisible at the individual level: correlated drift across agents suggesting a shared environmental cause, divergent drift suggesting agent-specific failures, or entropy synchronization suggesting emergent coordination. This multi-scale architecture maps onto practical deployment scenarios — a fleet of autonomous vehicles, a team of AI assistants operating under shared constraints, a network of industrial sensors — and enables monitoring at the organizational level without requiring centralized control of individual agent behavior.
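
A supervisor can begin to separate shared from agent-specific drift by comparing agents' entropy trajectories. The sketch assumes each agent reports a per-step entropy series of equal length. It uses pairwise correlation of first differences to test whether drift is shared, and net change over the window to decide which agents are drifting. Both thresholds and all names are hypothetical, and entropy synchronization is not modeled.

```python
import math


def drift_rates(h: list) -> list:
    """First differences of one agent's entropy trace (dH/dt at unit time step)."""
    return [b - a for a, b in zip(h, h[1:])]


def correlation(a: list, b: list) -> float:
    """Pearson correlation; returns 0.0 if either series is constant."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return 0.0 if va == 0 or vb == 0 else cov / math.sqrt(va * vb)


def fleet_report(histories: dict, corr_threshold: float = 0.8, drift_threshold: float = 0.5) -> dict:
    """Separate drift shared across agents from drift confined to individual agents."""
    names = sorted(histories)
    rates = {n: drift_rates(histories[n]) for n in names}
    corrs = [correlation(rates[a], rates[b]) for i, a in enumerate(names) for b in names[i + 1:]]
    drifting = [n for n in names if abs(histories[n][-1] - histories[n][0]) > drift_threshold]
    shared = bool(corrs) and min(corrs) >= corr_threshold and len(drifting) == len(names)
    return {"shared_cause_suspected": shared, "agent_specific": [] if shared else drifting}


print(fleet_report({"a": [1.0, 1.2, 1.5, 1.9], "b": [2.0, 2.2, 2.5, 2.9]}))
print(fleet_report({"a": [1.0, 1.2, 1.5, 1.9], "c": [1.0, 1.0, 1.01, 1.0]}))
```

In the first case both agents drift in lockstep, pointing to a common environmental cause; in the second only agent `a` drifts, so the report isolates it without the supervisor touching either agent's behavior.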

A third extension is diagnostic classification. The current system treats distributional change as a generic alarm: something has shifted, investigate further. Future work could develop diagnostic signatures — characteristic patterns in the derivative hierarchy that correspond to specific types of constraint violation. If hallucination produces a different entropy-velocity profile than jailbreak erosion, and jailbreak erosion produces a different profile than emotional manipulation, then the monitoring system could not only detect drift but classify it, enabling targeted interventions rather than generic nudges. The bench-scale data suggest that different failure modes produce distinguishable entropy signatures, but the evidence is preliminary and the classification framework has not been built. This remains speculative and is labeled as such.

Finally, independent theoretical work provides convergent support for the framework’s foundations. In October 2023, Wong et al. published a paper in the Proceedings of the National Academy of Sciences proposing a law of increasing functional information: the claim that complex systems under selection for function exhibit directional increases in information content over time. Their analysis spans stellar nucleosynthesis, mineral evolution, biological adaptation, and technological development. The proposed law predicts exactly the kind of concentration and dispersion dynamics the Entropy Engine monitors — systems under effective selection concentrate their outputs toward functional states, while systems where selection weakens show outputs scattering across less functional configurations. Wong et al. acknowledge that computing their central metric, functional information, is infeasible for most real systems because it requires knowledge of the full configuration space. Shannon entropy of observable output distributions provides a computable proxy for the signature of the process they describe.

The convergence is notable because it was discovered after the fact. The Entropy Engine was not derived from the law of increasing functional information. It was built from the practical problem of monitoring AI behavioral drift, using standard information theory and production systems engineering. That a team of nine researchers working from evolutionary biology, mineralogy, and complexity science arrived at structural conclusions compatible with a framework built from operational necessity in an unrelated domain suggests that both efforts may be tracking the same underlying dynamics. The convergence does not prove the connection. It indicates that the connection deserves rigorous investigation — and it provides the Entropy Engine with a second independent theoretical justification beyond Khinchin: not only is the monitoring signal mathematically forced, but the process it monitors may be an instance of a universal principle governing how complex systems evolve.

9. Conclusion

Shannon entropy is the unique measure of distributional uncertainty. This is not a design choice. It is a theorem, proved by Khinchin in 1957 and unchallenged since. Its time-derivatives are therefore the unique measures of distributional dynamics — the canonical signals for how a probability distribution is evolving. Any information-processing system that operates through probabilistic selection has a well-defined entropy signature, and deviations from that signature are the mathematically guaranteed consequence of changes in the system’s constraint landscape.

The Entropy Engine applies this chain of reasoning to a practical problem: detecting and correcting behavioral drift in AI systems before it produces harmful outputs. It monitors H, dH/dt, d²H/dt², and d³H/dt³ over rolling windows, detects deviations from calibrated baselines, fuses statistical signals with semantic constraint tracking through a dual-layer architecture, and feeds the result back as non-prescriptive nudges that inform the system’s next generation step without overriding its autonomy. In bench-scale testing, this produces a 31 percent improvement in token efficiency over unmonitored operation, an improvement in constraint adherence from 60 to 95 percent across five categories of explicit operational constraints, and a 10-to-20 step early warning margin before coherence failures.

The system works because it monitors the only thing worth monitoring. Not what the system says — classifiers do that, and they do it well for the problems they were designed to solve. The Entropy Engine monitors something classifiers cannot: how the system’s commitment process is evolving over time. It watches the shape of the Draft — the breadth of the viable output space at each generation step — not the content of what is ultimately committed. And because Shannon entropy is the unique measure of that shape, the monitoring signal is not a heuristic or an approximation. It is the information-theoretically correct observable for the task.

Claude Shannon published his mathematical theory of communication in 1948. He gave us the measure. Aleksandr Khinchin proved its uniqueness in 1957. He established that no alternative exists. The Entropy Engine applies their mathematics to a problem neither could have imagined but their work was built for: keeping AI systems honest with themselves, in real time, at the level of their own distributional dynamics.

The rest is engineering. Important engineering, with significant work remaining. But the foundation is not a conjecture. It is a theorem.

References

Khinchin, A. I. (1957). Mathematical Foundations of Information Theory. Dover Publications.

Landauer, R. (1961). Irreversibility and heat generation in the computing process. IBM Journal of Research and Development, 5(3), 183–191.

Rényi, A. (1961). On measures of entropy and information. Proceedings of the Fourth Berkeley Symposium on Mathematical Statistics and Probability, 1, 547–561.

Shannon, C. E. (1948). A mathematical theory of communication. The Bell System Technical Journal, 27(3), 379–423.

Wong, M. L., Cleland, C. E., Arend, D., Bartlett, S., Cleaves, H. J., Demarest, H., Prabhu, A., Hazen, R. M., et al. (2023). On the roles of function and selection in evolving systems. Proceedings of the National Academy of Sciences, 120(43), e2310223120.
