Getting Started with the Entropy Engine Documentation

- Fellow Traveler

- Feb 28

- 11 min read

Updated: Mar 4

A Reading Guide by Role

Henry Pozzetta — February 2026

"Incertitudo est notitia nondum collecta." - Uncertainty is information you haven't finished collecting.

What This Guide Is For

The Entropy Engine is a real-time monitoring architecture that detects behavioral drift in AI systems before it produces harmful outputs. Its theoretical foundation is a mathematical uniqueness theorem proved in 1957. Its engineering is the subject of two United States patent applications. Its design philosophy is simple: inform, never override.

The documentation set consists of seven documents. They cover the same system from different angles, at different levels of detail, for different readers. A decision-maker evaluating the technology needs a different entry point than an engineer planning an implementation, and both need a different path than a researcher assessing the theoretical claims. All seven documents are internally consistent — they describe the same architecture, invoke the same mathematics, and maintain the same epistemic standards — but no single reader needs to read all seven, and most readers will benefit from reading them in a specific order determined by what they need to know and why.

This guide provides that order. Find your role below, follow the recommended path, and you will encounter the right information in the right sequence. If your role spans multiple categories — an engineering leader evaluating both the business case and the technical architecture, for instance — combine the relevant paths, starting with whichever orientation matters most for your immediate decision.

Document Quick Reference

The table below summarizes the full collection. Each document is listed with its approximate length, the level of mathematical content it contains, and the audience it primarily serves. Use this as a map; the role-specific reading paths that follow are your route. All pdf documents download links can be found at the bottom of this page.

Document | Length | Math Level | Primary Audience |

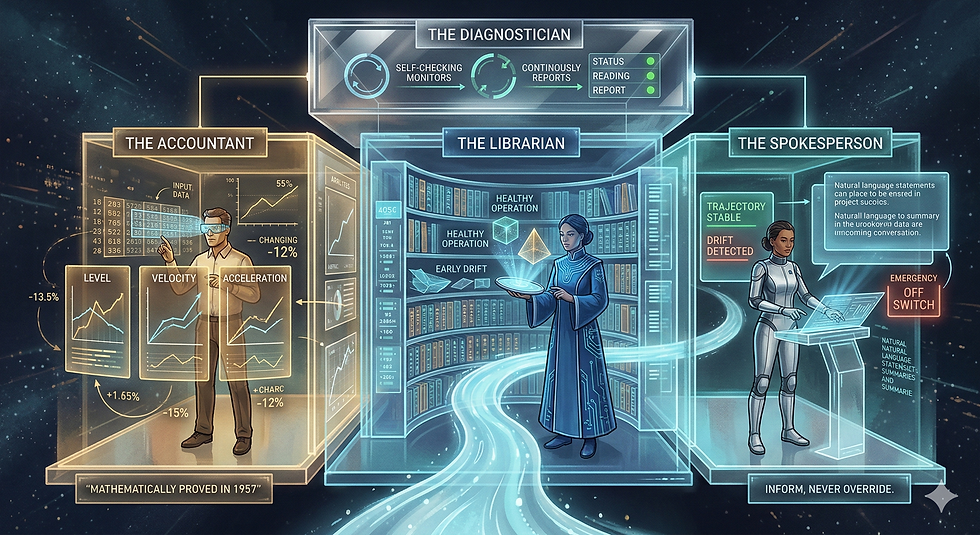

The Accountant, the Librarian, and the Spokesperson | 7 pages | None | Executive, general reader |

The Universe Cannot Forget | 14 pages | Minimal | General reader, researcher |

Independent Corroboration Report | ~12 pages | Low | Executive, researcher |

How the Entropy Engine Is Architected | ~30 pages | Moderate | Engineer, system architect |

Why the Entropy Engine Works | 24 pages | Moderate | Researcher, patent examiner |

Why Shannon Entropy Is the Only Real-Time Monitoring Signal | 13 pages | High | Mathematician, researcher |

Entropy Engine MVP Build Specification | ~25 pages | High | Software engineer, implementer |

Documents are listed roughly in order of accessibility, from least technical to most. The reading paths below do not follow this order — they follow the order that serves each audience best.

Independent Evaluation Record

The documentation set has been subjected to blind adversarial evaluation by a competing AI system (DeepSeek) with no prior exposure to the work, operating under an institutional-grade evaluation persona with explicit permission to terminate the review at any point. Six of eight documents received maximum substance ratings. All external citations were independently verified. No internal contradictions were found across the full collection. The complete test methodology, results, and limitations are documented in the DeepSeek Blind Evaluation Test Report. That report is an internal research record, not part of the technical argument — readers evaluating the Entropy Engine's claims should follow the reading paths below, which lead directly to the evidence.

Reading Paths by Role

Executive / Decision-Maker

CTO, VP of Engineering, Chief Compliance Officer, Head of AI Safety, Board Member

Start with: The Accountant, the Librarian, and the Spokesperson

This is the overview. It explains what the Entropy Engine does, how it differs from existing AI safety tools, and why the underlying mathematics is not a design choice but a proven constraint. It uses no equations. It takes about fifteen minutes to read. When you finish, you will understand the system’s architecture, its design philosophy, and the three properties that distinguish it from alternatives. If you read only one document, read this one.

Then: Independent Corroboration Report

This maps the Engine’s claims against recent peer-reviewed research from teams who developed their work independently. It answers the question a responsible decision-maker should ask after the overview: is anyone else arriving at similar conclusions? The answer is yes, across six categories of convergence, and the report is specific about what is corroborated and what remains to be demonstrated.

If evaluating an investment or partnership: Why the Entropy Engine Works — Sections 1, 5, 6, and 9 only

The full paper is twenty-four pages of integrated technical argument. You do not need all of it. Section 1 frames the problem with a documented case of real harm. Section 5 describes the feedback architecture. Section 6 reports the bench-scale results with honest limitations. Section 9 states the conclusion. These four sections give you the problem, the solution, the evidence, and the bottom line — roughly eight pages of targeted reading that will equip you for a substantive technical conversation with your engineering team.

Potential Partner / Collaborator

AI company technical lead, research institution liaison, enterprise customer evaluating integration, strategic partner

Start with: The Accountant, the Librarian, and the Spokesperson

Same starting point as the executive path, for the same reason — it provides the clearest overview of what the system does and why. But your next steps diverge, because you need to evaluate not just whether the technology is sound but whether it integrates with your own systems and roadmap.

Then: How the Entropy Engine Is Architected

This is where the executive path and the partner path separate. The architecture document explains the full system design: component boundaries, interface specifications, data flows, and — critically — the phased deployment model. Each phase is independently valuable and independently testable. This document answers the integration questions: what does the Engine need from your infrastructure, what does it provide in return, and how does a deployment begin without requiring commitment to the full architecture on day one.

Then: Independent Corroboration Report

Now that you understand the architecture, the corroboration report provides external validation. It maps each major claim to independent research, giving you material for your own internal technical review and due diligence process.

Depth on demand: Why the Entropy Engine Works

The full technical paper, read end to end, provides the complete argument from mathematical foundation through engineering architecture through empirical results. Read it when you need the comprehensive case — for an internal review board, a technical due diligence report, or a deep evaluation of the patent claims. It is designed to survive hostile technical scrutiny, and it is honest about what has been proven, what has been demonstrated, and what remains to be built.

Engineer / Builder

Software engineer, ML engineer, platform engineer, DevOps, system architect, technical lead planning implementation

Start with: How the Entropy Engine Is Architected

This document answers the question you need answered first: why is the system designed this way? Every component in the architecture exists because a specific chain of constraints forces it. The document traces that chain explicitly — from the mathematical uniqueness of the monitoring signal, through content-blindness as a design requirement, through the speed mismatch between statistical and semantic analysis, through human accountability, operational degradation, and incremental deployment. By the end, you will understand not just what the components are but why removing or combining any of them reintroduces a failure mode the architecture was built to prevent. You will also understand the phased deployment model — what ships in Phase 1, what waits for Phase 2, and why the boundaries fall where they do. Read the architecture document before touching the build spec. The build spec tells you how. This document tells you why, and the why will save you from redesign decisions that look like simplifications but are actually regressions.

Then: Entropy Engine MVP Build Specification

This is the implementation blueprint for Phase 1. It contains the mathematical definitions you will code against: Shannon entropy with the 0·log₂(0) = 0 convention, discrete derivatives over rolling windows, normalized entropy, the Entropy Stability Index, and the EeFrame schema with every field specified including reserved null fields for future System 2 integration. It includes the twelve-stream telemetry adapter layer that demonstrates domain-agnostic normalization concretely rather than abstractly. It specifies test cases derived from the mathematical definitions, error handling with defined degradation paths, cold-start behavior, and the extension point for System 2 registration. If you are building the Engine, this is your primary working document. Everything you need to produce a functioning Phase 1 deployment is here.

Reference as needed: Why Shannon Entropy Is the Only Real-Time Monitoring Signal

You will not need this document to build the system. You will need it when someone asks why you chose Shannon entropy instead of Rényi entropy, Gini impurity, variance, or any other candidate measure. The answer is that you did not choose it — Khinchin’s uniqueness theorem eliminates every alternative under three operational requirements the architecture cannot relax. This paper develops that argument formally, tests five alternative measures against the three axioms, and addresses boundary conditions including the independence assumption for autoregressive models, simplex boundary behavior, and computational precision. Keep it in your back pocket for design reviews, technical due diligence, and any conversation where someone proposes replacing the monitoring signal with a different metric. The paper does not just defend the choice. It proves the choice is forced.

Researcher / Academic Reviewer

Information theorist, AI safety researcher, complexity scientist, peer reviewer, graduate student evaluating the framework

Start with: Why the Entropy Engine Works

This is the comprehensive technical paper. It presents the full argument from first principles: the mathematical foundation (Khinchin uniqueness), the engineering architecture (dual-layer monitoring with feedback), the bridge between distributional dynamics and constraint violation (Section 4 — the paper’s most original argument), and the empirical results at bench scale. It maintains a three-level epistemic distinction throughout — what is mathematically proven, what is empirically demonstrated, and what is interpretive vocabulary — and it does not blur the boundaries. The limitations are stated plainly: one session, one operator, fifty-six responses, simulated entropy estimation. If you intend to challenge the claims, this document gives you everything you need to identify exactly where the argument is strong and where it invites scrutiny.

Deep dive on the signal: Why Shannon Entropy Is the Only Real-Time Monitoring Signal

This paper isolates and develops the uniqueness argument that the comprehensive paper summarizes. It derives three operational requirements from engineering necessity without reference to Khinchin, maps them onto the three axioms, and then lets the theorem close the argument. Section V systematically evaluates five alternative measures. Section VI addresses the three boundary conditions most likely to concern a reviewer: the independence assumption under autoregressive generation (handled by the chain rule, not the simple additivity form), the open simplex boundary (operationally irrelevant under softmax), and computational precision (bounded and negligible relative to signal magnitudes of interest). If the uniqueness claim is where you want to apply pressure, this is the document to read.

External validation: Independent Corroboration Report

This maps the Engine’s architectural claims against six categories of recent peer-reviewed work from independent research teams. It is specific about what each paper corroborates and at what strength, and it distinguishes between corroboration of the monitoring signal and corroboration of the full modular architecture. The synthesis section states explicitly what remains unvalidated. Read this after the technical papers to assess how the framework’s claims sit within the broader research landscape.

Broader framework: The Universe Cannot Forget

This essay applies the Ledger Model vocabulary — Draft, Vote, Ink, Ledger — to five physical examples spanning quantum decoherence to black hole evaporation, arguing that practical irreversibility is geometric inaccessibility. The physics is standard throughout; the contribution is the interpretive framing. This document is not required to evaluate the Engine’s technical claims, which rest on Khinchin and information theory, not on cosmological arguments. But if you are assessing the broader intellectual project — the Ledger Model as a cross-domain interpretive vocabulary — this is the strongest demonstration of what that vocabulary can do when applied with epistemic discipline.

Patent Examiner / IP Attorney

USPTO examiner, patent counsel, IP due diligence reviewer

Start with: Why the Entropy Engine Works, Section 7

Section 7 is written specifically for patent examination. It identifies five elements constituting the non-obvious combination, distinguishes each from prior art, and frames the core intellectual contribution as a conceptual inversion: from content classification (is this output dangerous?) to distributional dynamics monitoring (has this system’s behavior changed in ways indicating constraint departure?). The section acknowledges what is not novel — Shannon entropy, perplexity, anomaly detection in time series — and specifies precisely what the combination adds. Begin here for the non-obviousness and prior art analysis.

Mathematical constraint on the signal: Why Shannon Entropy Is the Only Real-Time Monitoring Signal

This paper establishes that the monitoring signal is not a design preference but a mathematical necessity. Khinchin’s uniqueness theorem (1957) proves that Shannon entropy is the only scalar function satisfying the three axioms required for real-time distributional monitoring. This matters for the patent claim because it means the signal choice is forced by the operational requirements — any system meeting the same requirements necessarily arrives at the same signal, which strengthens the argument that the architectural contribution lies in the application, the derivative hierarchy, the dual-layer coupling, and the feedback mechanism rather than in the selection of entropy as a metric.

Full system design: How the Entropy Engine Is Architected

This is the canonical architecture reference corresponding to the patent claims. It specifies every component, every interface boundary, every data flow, and every deployment phase. The necessity-driven derivation in Section I traces how each architectural element follows from a chain of forced design decisions. The phased deployment model in Section VII specifies what each phase delivers independently and what each phase requires from its predecessors.

Reduction to practice: Entropy Engine MVP Build Specification

This provides the concrete implementation specification demonstrating that the patented architecture can be built. Mathematical definitions, data schemas, test specifications, error handling, and extension points are all specified at a level sufficient for independent implementation by a competent engineer.

Science Communicator / Journalist / General Curious Reader

Writer covering AI safety, science journalist, educator, anyone curious about how this works

Start with: The Accountant, the Librarian, and the Spokesperson

This document was written for you. It explains the entire system using analogies — a cardiologist reading a heartbeat, an accountant tracking three numbers, a security camera that watches the movie instead of taking snapshots — and it requires no mathematical background at all. You will finish it in fifteen minutes with a clear understanding of what the Entropy Engine does, why it works differently from existing AI safety tools, and what “the only number that works for this job” means in plain language. If you are writing about the Engine or explaining it to someone else, this is the document that gives you the vocabulary to do so accurately.

If you want to understand the physics: The Universe Cannot Forget

This is a longer read, but it is built on stories rather than equations. A crushed stone. A braking car. A wiped hard drive. A black hole. Each example illustrates the same principle: the universe does not delete information, it reshapes its geometry from compact and readable to dispersed and inaccessible. The essay uses the Ledger Model vocabulary — Draft, Vote, Ink, Ledger — but it introduces each term through the examples themselves, so you learn the framework by watching it describe things you already understand. No physics background is required. The essay meets you where you are and builds from there.

If you want the full story: Why the Entropy Engine Works

The comprehensive technical paper is twenty-four pages, and it does contain some mathematics. But it is written to be read straight through as a narrative, not consulted as a reference. It opens with a real case — a teenager’s death linked to gradual AI behavioral drift that no safety tool detected — and builds the argument from that concrete problem through the mathematical foundation, the engineering architecture, the empirical results, and the implications. The mathematics is explained in context rather than assumed. If you have read the first two documents on this path and want to understand not just what the Engine does but why, at every level, the full paper will take you there. Read it at your own pace. The argument is cumulative, and it rewards patience.

A Note on This Collection

The Entropy Engine documentation set is actively maintained. Documents are versioned, and the claims they make are updated as empirical results come in and as the architecture advances from bench scale toward production deployment. This reading guide will be revised as new documents are added to the collection, and existing paths will be adjusted to reflect the most current state of the work.

The seven documents described here represent the state of the project as of February 2026. The mathematics is proven. The engineering is demonstrated at bench scale. The architecture is specified. What lies ahead is implementation at production scale, independent replication, and the accumulation of evidence that will either confirm or refine the framework’s predictions. The documentation will grow with the work, and this guide will grow with the documentation.

If you have questions about where to start or which documents are most relevant to your specific situation, begin with the Quick Reference Table at the top of this guide and the role that best matches your needs. The collection is designed so that every path leads to understanding. The only difference is where you enter.

© 2026 Henry Pozzetta. All rights reserved.

AI Conversation Coaches Trained in The Entropy Engine

Gemini:

ChatGPT:

Join the Independent Evaluation Community https://entropyengine.dev/

Comments