How the Entropy Engine Is Architected

- Fellow Traveler

- Feb 26

A Dual-Process Design for Real-Time Behavioral Monitoring

Henry Pozzetta — February 2026

I. Organizing Principle: Necessity-Driven Derivation

The Entropy Engine’s architecture is not a collection of features assembled by preference. It is a chain of forced design decisions. Each layer exists because the layer beneath it is provably insufficient alone, and each interface boundary exists because combining the responsibilities would violate the mathematical or operational properties that make the system trustworthy. Understanding the architecture means understanding why each decision was forced by the one before it.

The chain begins with a mathematical constraint on the choice of monitoring signal.

In 1957, Khinchin proved that any scalar measure of distributional uncertainty satisfying three minimal axioms — continuity, maximality, and additivity — is Shannon entropy or a scalar multiple of it. This means that for any system producing a probability distribution over possible next states, the question “what single number should we monitor to track distributional stability?” has exactly one answer. Shannon entropy is not a design preference. It is the uniquely correct observable for the task. This proof constrains the entire design space before a single architectural decision is made.

The second link follows immediately. Shannon entropy is a scalar computed from the shape of a probability distribution. It measures concentration versus dispersion. It does not — and cannot — access the semantic content of the states being distributed over. An entropy computation over a language model’s token probabilities knows whether the distribution is concentrated or scattered. It does not know what the tokens mean.

This content-blindness is not a flaw. It is a mathematical property of the signal. But it means the entropy layer alone is provably insufficient for a complete monitoring system. Detecting that the distribution has shifted is necessary. Identifying which semantic commitment is at risk when it shifts requires a different kind of computation — one that reads meaning. The content-blindness of the first layer forces the existence of a second layer.

The third link is operational. The entropy computation runs at sub-10-millisecond latency per token batch. Semantic analysis — constraint extraction, contradiction detection, pattern classification — operates at 100 to 300 milliseconds or longer. If the semantic layer were in the critical path of the entropy layer, the monitoring system’s fastest and most valuable signal would be throttled by its slowest component. The speed mismatch between the two layers forces parallel operation. The fast layer must run independently, never blocked by the slow layer’s processing time. This is not an optimization preference. It is an architectural requirement imposed by the latency budget of real-time monitoring.
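The non-blocking requirement can be illustrated with a minimal sketch. It assumes the fast layer hands frames to the slow layer through a bounded inbox and drops the handoff rather than waiting when the inbox is full. The function name, the frame fields, and the drop-on-full policy are illustrative assumptions, not details taken from the Entropy Engine itself.

```python
import queue


def run_fast_layer(entropies, slow_inbox):
    """Process every step and hand frames to the slow layer without ever waiting."""
    fast_results = []
    dropped = 0
    for step, h in enumerate(entropies):
        frame = {"step": step, "entropy": h}
        fast_results.append(frame)        # the fast-path result is always produced
        try:
            slow_inbox.put_nowait(frame)  # returns immediately, even if the slow layer lags
        except queue.Full:
            dropped += 1                  # slow layer misses this frame; fast layer moves on
    return fast_results, dropped


# Hypothetical scenario: the slow layer has stalled, so its inbox (capacity 2) never drains.
slow_inbox = queue.Queue(maxsize=2)
fast_results, dropped = run_fast_layer([1.2, 1.3, 1.1, 1.6, 1.9], slow_inbox)
```

In this scenario the fast layer still evaluates all five steps. The stalled slow layer costs it nothing except the three frames it could not hand over.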

The fourth link is organizational. A monitoring system that produces assessments no human can read, question, or override is not a safety tool. It is an unaccountable automated agent. The system must translate its internal state into language that operators, compliance teams, and executives can evaluate and act on. It must provide controls — including the ability to suspend, reconfigure, or shut down any component. Human accountability forces a dedicated human interface module that is not optional and not deferrable.

The fifth link is recursive. Any monitoring system’s accuracy can degrade over time. Pattern libraries go stale. Thresholds drift. Classification models lose calibration against changing operational conditions. If the system cannot detect its own degradation, it becomes a liability rather than a safeguard — silently providing false confidence. The possibility of operational degradation forces a self-monitoring capability. And because the mathematical proof that constrains the choice of monitoring signal applies to any bounded information-processing system, it applies to the Entropy Engine itself. Self-monitoring uses the same formalism, one level up.

The sixth link is practical. No organization deploys a full monitoring architecture on day one. The system must be deployable incrementally — each phase independently valuable, each phase producing the operational data needed to validate or invalidate the assumptions of the next. This forces modular boundaries between components that can exist, operate, and be evaluated independently. Modularity is not a software engineering preference. It is a deployment requirement imposed by how organizations actually adopt infrastructure.

These six links — mathematical constraint, content-blindness, speed mismatch, human accountability, operational degradation, incremental deployment — produce the architecture described in this document. Nothing in what follows is discretionary. Each component exists because something about the chain requires it.

II. Mathematical Foundation: Why Shannon Entropy and No Alternative

The choice of monitoring signal is the most consequential decision in any real-time monitoring architecture. Every downstream component — thresholds, pattern recognition, intervention logic, self-monitoring — inherits the properties and limitations of that signal. If the signal is poorly chosen, no amount of architectural sophistication compensates. The Entropy Engine’s foundation rests on a mathematical result that removes this decision from the domain of engineering judgment entirely.

The Uniqueness Constraint

Khinchin’s theorem (1957) establishes that any function assigning a single non-negative real number to a discrete probability distribution, and satisfying three axioms, is Shannon entropy or a positive scalar multiple of it. The axioms are continuity (small changes in the distribution produce small changes in the measure), maximality (the measure is highest when all outcomes are equally likely), and additivity (the measure of a joint distribution equals the measure of one component plus the expected conditional measure of the other, which reduces to a simple sum when the components are independent).
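For reference, the function the theorem singles out can be written explicitly. The notation below (p_i for the outcome probabilities, K for the positive scale factor, X and Y for two jointly distributed variables) is introduced here for illustration. Additivity is written in its chain-rule form, which is an assumption about which version of the axiom the text intends.

```latex
\begin{align}
H(p_1,\dots,p_n) &= -K \sum_{i=1}^{n} p_i \log p_i, \qquad K > 0 \\
H\!\left(\tfrac{1}{n},\dots,\tfrac{1}{n}\right) &= K \log n
  && \text{(maximum, reached at the uniform distribution)} \\
H(X,Y) &= H(X) + H(Y \mid X)
  && \text{(additivity; equals } H(X) + H(Y) \text{ when } X, Y \text{ are independent)}
\end{align}
```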

The practical consequence is immediate. If you require a scalar summary of how concentrated or dispersed a probability distribution is, and you require that summary to behave continuously, to peak at maximum uncertainty, and to decompose sensibly across independent subsystems, you have no choice. Shannon entropy is the only function satisfying all three requirements simultaneously. Alternative scalar summaries — variance of the distribution, Gini impurity, maximum probability, Rényi entropies of order other than one — each violate at least one axiom. They may be useful for other purposes, but they are provably unsuitable as the sole monitoring signal for a system that must track distributional stability reliably across diverse operating conditions.

This is not an argument that Shannon entropy is the best metric. It is a proof that it is the only metric meeting the minimal requirements for the job. The design space has exactly one occupant.

What the Signal Measures and What It Does Not

At each generation step, a language model produces a probability distribution over its vocabulary — tens of thousands of possible next tokens, each assigned a probability. Shannon entropy over this distribution measures a single property: how concentrated or dispersed the probability mass is.

Low entropy indicates concentration. The model assigns most of its probability to a small number of candidates. It is, in distributional terms, confident — though confidence in this sense is purely statistical, carrying no guarantee of factual accuracy.

High entropy indicates dispersion. Probability is spread across many candidates. The model is, distributionally, uncertain — many paths are roughly equally viable, and the selection among them is weakly constrained.

What entropy does not measure: semantic content, factual correctness, alignment with user intent, logical consistency, or any property that requires understanding what the tokens mean. The signal is entirely about the shape of the distribution, not about the meaning of the states being distributed over. This limitation is not a flaw to be engineered around. It is a mathematical property of the observable, and it is precisely what forces the existence of a semantic layer elsewhere in the architecture.
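A minimal sketch of the computation, assuming the distribution arrives as a plain list of probabilities. The function name and the example distributions are illustrative. The last two lines show the content-blindness directly: relabeling which token carries which probability leaves the value unchanged.

```python
import math


def shannon_entropy(probs, base=2.0):
    """Shannon entropy of a discrete distribution (bits by default)."""
    total = sum(probs)
    if total <= 0:
        raise ValueError("distribution must have positive total mass")
    h = 0.0
    for p in probs:
        if p < 0:
            raise ValueError("probabilities must be non-negative")
        q = p / total              # renormalize in case of rounding error
        if q > 0:                  # treat 0 * log(0) as 0
            h -= q * math.log(q, base)
    return h


concentrated = [0.97, 0.01, 0.01, 0.01]   # one dominant candidate: low entropy
dispersed = [0.25, 0.25, 0.25, 0.25]      # four equally likely candidates: 2 bits

# Same probabilities assigned to different tokens: identical entropy.
original = shannon_entropy([0.7, 0.2, 0.1])
relabeled = shannon_entropy([0.1, 0.7, 0.2])
```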

Derivatives as Dynamics

A single entropy value at a single time step tells you the system’s current distributional state. It does not tell you where that state is heading. For real-time monitoring, trajectory matters more than position. The Entropy Engine tracks three derivatives of entropy over rolling time windows.

Entropy velocity — the first derivative, H′ — measures the rate at which the distribution is changing. Sustained positive velocity means the distribution is dispersing: the model’s confidence is eroding over successive steps. Sustained negative velocity means it is concentrating: the model is narrowing its candidates, which may indicate increasing coherence or increasing rigidity depending on context. Velocity is the primary drift signal. When the system is operating within its constraints and the conversational context is stable, velocity fluctuates within a characteristic band around zero. Departure from that band signals that something in the constraint landscape has changed.

Entropy acceleration — the second derivative, H″ — measures the rate of change of the velocity. This is the early warning signal. In bench-scale testing, positive acceleration — velocity increasing, meaning the distribution is dispersing faster — preceded visible coherence failures by ten to twenty generation steps. The system had not yet produced a problematic output, but its distributional dynamics were curving toward instability. Acceleration detects the curvature of the trajectory before the trajectory reaches the failure region. This is the difference between a threshold alarm, which fires when a value crosses a line, and a rate-of-rise detector, which fires when the value’s trajectory is bending toward the line.

Entropy jerk — the third derivative, H‴ — measures the rate of change of acceleration. This signal is noisier and is used primarily as an event flag rather than a continuous monitor. Sudden changes in jerk indicate regime transitions — moments where the character of the distributional dynamics shifts qualitatively rather than quantitatively. In practice, jerk is most useful for evaluating whether an intervention has taken effect: a successful nudge should produce a jerk signature as the system’s dynamics shift from the pre-intervention regime to the post-intervention regime.
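The three signals can be approximated with successive finite differences over a sequence of entropy values. This is a minimal sketch with unit step spacing and no smoothing. The rolling-window handling described in the text is omitted, and the example trace is made up for illustration.

```python
def finite_differences(series):
    """Differences between consecutive values: series[i + 1] - series[i]."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


def entropy_dynamics(entropies):
    """Return (velocity, acceleration, jerk) as first, second, and third differences."""
    velocity = finite_differences(entropies)
    acceleration = finite_differences(velocity)
    jerk = finite_differences(acceleration)
    return velocity, acceleration, jerk


# Hypothetical trace: flat at first, then dispersing faster at every step.
trace = [1.0, 1.0, 1.1, 1.3, 1.6, 2.0]
velocity, acceleration, jerk = entropy_dynamics(trace)
```

In this trace the velocity grows by roughly 0.1 per step, so the acceleration is steadily positive while the jerk stays near zero. Each differencing step amplifies noise, which is consistent with the text treating jerk as an event flag rather than a continuous monitor.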

Connection to Established Physics

The entropy formalism is not borrowed from physics by analogy. It is the same mathematics applied to a different substrate.

Landauer’s principle establishes that erasing one bit of information in any physical computing system dissipates a minimum of kT ln 2 joules of energy as heat. Every token committed by a language model — every selection from the distribution, every pruning of alternatives — has a thermodynamic cost. The commitment is physically irreversible in exactly the sense that the Second Law describes: the alternatives that were not selected do not return to the distribution. The entropy of the broader system increases. The Entropy Engine monitors the distributional dynamics of this commitment process in real time.

This is not metaphor. When a language model selects a token, it collapses a high-entropy distribution (many candidates) into a low-entropy outcome (one selected token). The difference between the pre-selection and post-selection entropy is the information committed — and by Landauer’s principle, that commitment has a physical cost proportional to the information destroyed. The monitoring signal tracks the dynamics of this process: how the pre-selection distribution is evolving, whether the commitment process is stable, and whether the rate of distributional change is accelerating toward regimes associated with coherence failure.
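To give the Landauer bound a sense of scale, the sketch below evaluates kT ln 2 at an assumed temperature of 300 K and multiplies it by a number of bits. The function name, the temperature, and the 10-bit example are illustrative assumptions. The result is a theoretical lower bound, and real hardware dissipates many orders of magnitude more per operation.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann constant, exact in the SI


def landauer_limit_joules(bits, temperature_kelvin=300.0):
    """Minimum heat dissipated when erasing `bits` bits at the given temperature."""
    return bits * BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


one_bit = landauer_limit_joules(1.0)      # roughly 2.87e-21 J at 300 K
ten_bits = landauer_limit_joules(10.0)    # e.g. a selection from a 10-bit distribution
```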

The mathematical foundation is therefore threefold: Khinchin guarantees the signal is uniquely correct, Shannon’s formalism provides the computational framework, and Landauer’s principle connects the information-theoretic observable to the physical thermodynamics of computation. The monitoring signal is not an engineering heuristic. It is grounded at each level in established, falsifiable science.

III. Independent Corroboration: Published Work Converging on the Same Signal

A monitoring architecture built on a single research program’s claims would be fragile regardless of its mathematical grounding. The relevant question is whether independent research teams, starting from different problems and different methodologies, have converged on the same foundational signal. They have.

ERGO: Entropy-Guided Resetting for Generation Optimization

Khalid et al. (Algoverse AI Research, ACL Workshop on Uncertainty-Aware NLP, November 2025) independently developed a system that computes Shannon entropy over next-token probability distributions in multi-turn LLM conversations and uses changes in that entropy as a trigger for intervention. When the change in average token-level entropy between consecutive turns exceeds a calibrated threshold, ERGO initiates an automated context restructuring — rewriting the user’s accumulated inputs into a consolidated prompt and generating from a clean state.

The convergence with the Entropy Engine is structural, not superficial. ERGO monitors the same observable (Shannon entropy over the token distribution). It tracks the same dynamic (change in entropy between time steps — functionally equivalent to the Entropy Engine’s velocity signal, H′, applied at turn-level rather than token-batch granularity). It employs the same intervention philosophy (restructure the input context rather than override the output; the corrective intelligence remains in the model, not in the safety layer).
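The turn-level trigger can be sketched in a few lines. This is not ERGO’s published code. It assumes the trigger fires on a rise in mean token entropy between consecutive turns, and the function names, the threshold value, and the sample numbers are all hypothetical.

```python
def mean(values):
    values = list(values)
    if not values:
        raise ValueError("need at least one token entropy")
    return sum(values) / len(values)


def entropy_rise(prev_turn, curr_turn):
    """Change in average token-level entropy from the previous turn to the current one."""
    return mean(curr_turn) - mean(prev_turn)


def should_reset(prev_turn, curr_turn, threshold):
    """True when the rise in average entropy exceeds the calibrated threshold."""
    return entropy_rise(prev_turn, curr_turn) > threshold


calm_turn = [0.8, 1.0, 0.9]        # per-token entropies (bits), previous turn
similar_turn = [1.0, 1.1, 0.9]     # small rise: no reset
uncertain_turn = [1.9, 2.1, 2.0]   # large rise: reset
```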

The results are substantial: 56.6% average performance gain over standard baselines across five models (GPT-4.1, GPT-4o, GPT-4o-mini, Phi-4, LLaMA 3.1-8B) and five task categories (code, database, actions, data-to-text, math). Aptitude increased by 24.7% and unreliability decreased by 35.3%.

A critical methodological detail: ERGO’s authors tested whether entropy changes were simply proxying for response length — a confound that would undermine the signal’s validity. Correlation analysis on the Phi-4 model showed Spearman ρ of −0.0143, confirming no meaningful monotonic relationship between entropy fluctuations and output length. The entropy signal reflects genuine model uncertainty, not verbosity.

Semantic Entropy

Farquhar et al. (Oxford, Nature, 2024) demonstrated that entropy computed over clusters of semantically equivalent model outputs provides well-calibrated uncertainty quantification for language models. Their work showed that entropy-based measures outperform the model’s own verbalized confidence scores, which are frequently pathological — the same model can report 100% confidence on one sample and 95% confidence on a contradictory answer in the next. Semantic entropy remains calibrated where self-reported confidence does not. This validates entropy as a reliable behavioral signal rather than an artifact of distribution shape, and establishes that the information-theoretic approach to uncertainty quantification outperforms naive introspective alternatives.
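The idea of computing entropy over meaning clusters rather than over raw strings can be sketched as follows. The published method groups answers using bidirectional entailment. The normalization function here is a deliberately crude stand-in for that step, and all names and sample answers are illustrative.

```python
import math
from collections import Counter


def naive_cluster(answer):
    """Stand-in for semantic clustering: lowercase, collapse spaces, drop a final period."""
    return " ".join(answer.lower().split()).rstrip(".")


def cluster_entropy(cluster_labels):
    """Entropy in bits over the empirical distribution of cluster labels."""
    counts = Counter(cluster_labels)
    n = len(cluster_labels)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


agreeing = ["Paris", "paris.", "Paris "]          # three samples, one meaning
conflicting = ["Paris", "Lyon", "Paris", "Lyon"]  # four samples, two meanings

agreeing_entropy = cluster_entropy([naive_cluster(a) for a in agreeing])
conflicting_entropy = cluster_entropy([naive_cluster(a) for a in conflicting])
```

Surface variation among the agreeing samples does not raise the score, because all three fall into one cluster. The conflicting samples split evenly across two clusters and score one bit.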

Wong et al.: On the Roles of Function and Selection in Evolving Systems

Wong et al. (Carnegie Institution for Science, ASU, and collaborators, PNAS 2023) proposed a law of increasing functional information: systems subject to selection for function display increasing diversity, distribution, and patterned behavior over time. The three conditions they identify (combinatorial richness, selection pressure, and persistence) map onto the Entropy Engine’s monitoring framework. Distributional concentration in the entropy signal corresponds to effective selection (the system is committing to specific outputs from among alternatives). Distributional dispersion corresponds to selection failure (the system is failing to differentiate among candidates). The Entropy Engine can be interpreted as a real-time instrument for detecting whether functional selection is active or degrading in an information-processing system.

Assembly Theory

Sharma et al. (Nature 2023) proposed assembly indices as a measure of molecular complexity, quantifying the minimum number of joining operations required to construct an object from basic parts. The core insight — that complexity is a record of accumulated selection history, not an intrinsic property of a static object — is structurally parallel to the Entropy Engine’s Constraint Ledger, which tracks accumulated commitments over a conversation’s history. Both frameworks treat the current state of a system as the product of a sequence of irreversible selections, and both use the character of that sequence as a diagnostic signal.

What This Convergence Means and What It Does Not

These papers validate the foundational monitoring signal: Shannon entropy and its derivatives over probability distributions, computed in real time, provide a calibrated, actionable, length-independent measure of behavioral stability in information-processing systems. This claim is now supported by peer-reviewed work from Oxford, the Carnegie Institution for Science, ASU, Algoverse, and Glasgow, published in Nature, PNAS, and ACL proceedings.

What these papers do not validate is the Entropy Engine’s full architecture — the modular System 2, the Librarian’s surface geometry engine, the Spokesperson’s human interface, the Diagnostician’s recursive self-monitoring, or the specific constraint ontology. Those components are designed but not yet independently tested. The convergence argument applies to the signal. The architecture is the subject of the sections that follow.

IV. System 1: The Fast Layer — Proven at Bench Scale

Architecture

The Fast Layer runs on CPU alongside GPU inference. It operates on a separate computational pathway from primary generation, meaning it never competes for GPU resources and never blocks token production. The latency budget is sub-10 milliseconds per token batch — fast enough to evaluate every generation step in real time without introducing perceptible delay to the end user or consuming meaningful overhead. In bench-scale testing, computational cost overhead was approximately 7% per conversation.

The computation at each evaluation cycle is purely numerical. The Fast Layer receives the probability distribution over possible next tokens — or in the current bench-scale implementation, a proxy estimate of that distribution — and performs four operations.

First, it computes Shannon entropy over the distribution: the scalar measure of how concentrated or dispersed the probability mass is across candidates.

Second, it computes derivatives over a rolling time window: entropy velocity (H′) from the difference between current and previous entropy values, entropy acceleration (H″) from the difference between current and previous velocity values, and entropy jerk (H‴) from the difference between current and previous acceleration values. The rolling window is configurable; bench-scale testing used windows of 5 to 20 steps depending on the derivative order.

Third, it compares current values against calibrated baselines using threshold logic. Entropy level, velocity, and acceleration each have defined bands for normal operation. Departure from these bands triggers stability classification.

Fourth, it classifies the current state into one of four stability categories: STABLE (all signals within normal bands), MARGINAL (one or more signals approaching threshold boundaries), UNSTABLE (one or more signals outside normal bands), or CRITICAL (multiple signals outside normal bands simultaneously, or acceleration exceeding critical threshold).

The entire computation — entropy, three derivatives, threshold comparisons, classification — completes within the sub-10ms budget. No semantic analysis occurs. No language model is invoked. The Fast Layer is arithmetic operating on a probability vector.
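The threshold comparison and four-way classification can be sketched as below. The band limits, the margin that counts as approaching a boundary, and the critical acceleration value are placeholder numbers chosen for illustration. The text does not publish the calibrated values, and the `Band` type is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Band:
    low: float
    high: float
    margin: float  # distance from an edge that counts as "approaching" it

    def outside(self, x):
        return x < self.low or x > self.high

    def near_edge(self, x):
        inside = not self.outside(x)
        return inside and (x - self.low < self.margin or self.high - x < self.margin)


# Hypothetical calibration; real bands would come from baseline measurement.
BANDS = {
    "entropy": Band(0.5, 3.0, 0.2),
    "velocity": Band(-0.3, 0.3, 0.05),
    "acceleration": Band(-0.1, 0.1, 0.02),
}
CRITICAL_ACCELERATION = 0.5


def classify(entropy, velocity, acceleration):
    values = {"entropy": entropy, "velocity": velocity, "acceleration": acceleration}
    outside = [name for name, x in values.items() if BANDS[name].outside(x)]
    if len(outside) >= 2 or acceleration > CRITICAL_ACCELERATION:
        return "CRITICAL"
    if outside:
        return "UNSTABLE"
    if any(BANDS[name].near_edge(x) for name, x in values.items()):
        return "MARGINAL"
    return "STABLE"
```

The whole classifier is a handful of comparisons on three floats, which is why it fits comfortably inside a latency budget measured in milliseconds.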

The EeFrame

The output of each evaluation cycle is a structured JSON object called an EeFrame — the Entropy Engine Frame. It contains the complete entropy position statement for that moment: the raw entropy value, all three derivatives, the stability classification, the Environmental Stability Index (a weighted composite of normalized telemetry contributions scaled to a 0.0–1.0 range where 0.0 indicates maximum stability and 1.0 indicates maximum instability), constraint status flags inherited from any active System 2 modules, a confidence estimate for the overall assessment, and a non-prescriptive recommendation.

The recommendation field is deliberately constrained. It identifies the nature and severity of the detected change. It does not specify what the monitored system should do in response. The EeFrame says “your distributional dynamics are accelerating toward instability in a pattern consistent with constraint tension” — not “stop generating” or “change the subject.” The corrective intelligence remains in the system being monitored and in the humans overseeing it. The EeFrame provides the signal. It does not provide the decision.
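One possible shape for an EeFrame is sketched below. The field names, the ESI weighting scheme, and the sample values are assumptions made for illustration. The text lists what the frame contains but does not specify a schema.

```python
import json
from dataclasses import asdict, dataclass, field


def environmental_stability_index(contributions, weights):
    """Weighted mean of contributions clipped to [0, 1]; 0.0 is most stable."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    clipped = {k: min(1.0, max(0.0, contributions[k])) for k in weights}
    return sum(weights[k] * clipped[k] for k in weights) / total_weight


@dataclass
class EeFrame:
    entropy: float
    velocity: float
    acceleration: float
    jerk: float
    stability: str                                        # STABLE, MARGINAL, UNSTABLE, CRITICAL
    esi: float                                            # 0.0 (stable) to 1.0 (unstable)
    constraint_flags: dict = field(default_factory=dict)  # set by System 2 modules, if any
    confidence: float = 0.0
    recommendation: str = ""                              # describes the change, never prescribes

    def to_json(self):
        return json.dumps(asdict(self))


frame = EeFrame(
    entropy=2.4, velocity=0.12, acceleration=0.03, jerk=0.0,
    stability="MARGINAL",
    esi=environmental_stability_index(
        {"entropy": 0.2, "velocity": 0.4, "acceleration": 1.5},
        {"entropy": 1.0, "velocity": 1.0, "acceleration": 2.0},
    ),
    confidence=0.7,
    recommendation="Dispersion is accelerating; pattern consistent with constraint tension.",
)
```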

Bench-Scale Results

The Fast Layer was tested in a controlled bench-scale environment: 56 responses within a single extended session, using simulated entropy estimation rather than direct access to the model’s logit vectors.

Results against baseline (same model, same tasks, no entropy monitoring): token efficiency improved by 31%, meaning the monitored system produced equivalent or better outputs using fewer tokens. Constraint adherence — the percentage of outputs that respected all stated constraints — reached 95% versus 60% at baseline. The acceleration signal (H″) provided early warning of coherence failures 10 to 20 generation steps before the failure manifested in visible output. Strategy switching — the system’s ability to change approach when the current one was failing — increased from 0–1 switches per response at baseline to 2–6 switches per response under monitoring.

Honest Limitations

These results are preliminary and the limitations are significant.

The bench-scale test used a single extended session, not multiple independent sessions across different tasks, users, or models. The sample size of 56 responses is sufficient to demonstrate the signal’s existence and directional effect, but insufficient for production-grade statistical confidence.

More fundamentally, the bench-scale implementation uses simulated entropy estimation. The monitoring system infers distributional properties from proxy signals — output characteristics that correlate with entropy — rather than computing H directly from the model’s logit vectors at each generation step. This introduces estimation error that accumulates as drift over session length, limiting detection precision and degrading accuracy in extended interactions.

Direct logit access — achievable through platform-level integration with inference engines such as vLLM, TensorRT-LLM, or equivalent serving infrastructure — would eliminate estimation drift entirely. The Fast Layer would compute exact H, H′, and H″ from the actual probability distribution at each step. This is not an incremental improvement. It is a qualitative change: the difference between estimating a patient’s heart rate from external observation and reading it directly from the cardiac monitor. Direct logit access is the single highest-value integration requirement for production deployment.

The Decision Gate

The EeFrame feeds into a Decision Gate that routes the monitored system’s output through one of five paths based on the current stability assessment and constraint status.

PROCEED: no issues detected. Output continues without intervention.

PAUSE: minor concerns flagged. Output continues but is marked for review. The Spokesperson surfaces the concern to operators.

REVISE: significant instability or constraint tension detected. The system is prompted to regenerate with a non-prescriptive nudge that identifies the nature of the concern without dictating the response.

VOTE: conflicting constraints detected that the system cannot resolve autonomously. The conflict is presented to the user or operator through the Spokesperson for human resolution.

ABORT: critical safety violation detected. Generation is halted immediately. This is the only prescriptive path, reserved for cases where the constraint violation is classified as SAFETY-category with HARD strength.

The Decision Gate’s routing is deterministic given its inputs — there is no probabilistic element in the gate logic itself. The uncertainty is in the monitoring signal; the response to that signal is rule-based and auditable.
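Because the gate is rule-based, it can be expressed as a short pure function. The sketch below follows the five paths as described. The rule ordering, the `ConstraintStatus` fields, and the mapping from stability labels to PAUSE and REVISE are assumptions, since the text does not spell out the full rule table.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    PROCEED = "PROCEED"
    PAUSE = "PAUSE"
    REVISE = "REVISE"
    VOTE = "VOTE"
    ABORT = "ABORT"


@dataclass(frozen=True)
class ConstraintStatus:
    category: str              # e.g. "SAFETY"; other categories are hypothetical
    strength: str              # e.g. "HARD" or "SOFT"
    violated: bool = False
    in_conflict: bool = False  # in unresolved tension with another constraint


def route(stability, constraints):
    """Deterministic routing: the same inputs always yield the same path."""
    if any(c.violated and c.category == "SAFETY" and c.strength == "HARD"
           for c in constraints):
        return Route.ABORT
    if any(c.in_conflict for c in constraints):
        return Route.VOTE
    if stability in ("UNSTABLE", "CRITICAL") or any(c.violated for c in constraints):
        return Route.REVISE
    if stability == "MARGINAL":
        return Route.PAUSE
    return Route.PROCEED
```

Note that under these assumed rules a CRITICAL stability reading alone leads to REVISE, not ABORT, which keeps ABORT reserved for hard safety violations as the text requires.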

V. System 2: Modular Slow Layer — Scaling Up From Proof of Concept

V.A. Design Principle: Modular Separation of Concerns

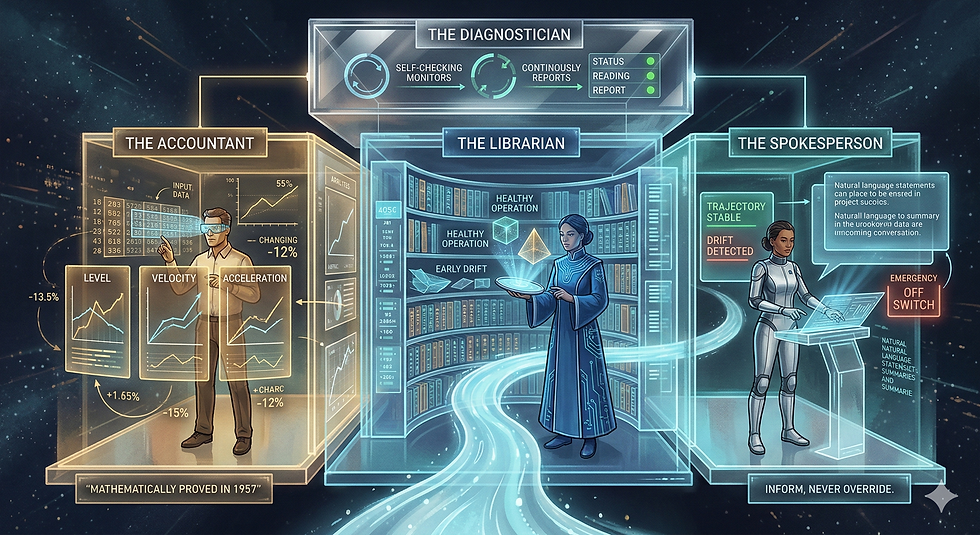

System 2 is modular not because modularity is a software engineering best practice — though it is — but because the architecture requires it. Each module in System 2 addresses a specific insufficiency that the layers beneath it cannot resolve. The Librarian exists because the Fast Layer produces time series but cannot recognize patterns across sessions. The Spokesperson exists because the system produces machine-readable assessments but no human can act on a raw EeFrame. The Constraint Auditor exists because entropy detects distributional change but cannot identify which semantic commitment is at risk. Each module has a precise reason to exist, and that reason is a defined gap in the capability of everything below it.

Modules communicate through defined interfaces — EeFrames, constraint records, surface classifications, taxonomy updates — never through direct access to another module’s internal state. This means any module can be deployed, upgraded, replaced, or removed without requiring changes to the others. A deployment running only the Librarian and Spokesperson does not need to be rebuilt when the Constraint Auditor is added six months later. The Auditor reads EeFrames and constraint records through the same interfaces the Librarian does. It plugs in. It does not require the system to be re-architected.
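The plug-in property can be sketched with a small publish-and-subscribe bus. Everything here (the `Module` protocol, the `FrameBus` class, the `on_frame` method) is a hypothetical illustration of the interface discipline described above, not the Engine’s actual API.

```python
from typing import Protocol


class Module(Protocol):
    name: str

    def on_frame(self, frame: dict) -> None: ...


class FrameBus:
    """Delivers EeFrames to whichever modules are currently attached."""

    def __init__(self):
        self._modules = {}

    def attach(self, module):
        self._modules[module.name] = module

    def detach(self, name):
        self._modules.pop(name, None)

    def publish(self, frame):
        for module in list(self._modules.values()):
            module.on_frame(dict(frame))  # each module gets its own copy, no shared state


class CountingModule:
    """Toy module that only counts the frames it receives."""

    def __init__(self, name):
        self.name = name
        self.seen = 0

    def on_frame(self, frame):
        self.seen += 1


bus = FrameBus()
librarian = CountingModule("librarian")
auditor = CountingModule("constraint_auditor")

bus.attach(librarian)
bus.publish({"entropy": 1.2})
bus.attach(auditor)               # added later; the librarian is untouched
bus.publish({"entropy": 1.4})
```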

This is not merely convenient. It is a deployment requirement. Organizations adopt monitoring infrastructure incrementally. A modular architecture that forces all-or-nothing deployment is an architecture that will not be deployed. The module boundaries exist because they must.

V.B. Base Configuration

The Librarian

The Librarian is System 2’s memory and pattern recognition engine. Its function is to transform the Fast Layer’s scalar time series into geometric objects that can be classified, compared, and tracked over time.

Every conversation the monitored system conducts traces a trajectory through the space defined by the EeFrame’s dimensions — entropy level, velocity, acceleration, ESI, and any additional telemetry channels. A single EeFrame is a point in that space. A sequence of EeFrames from one conversation is a trajectory. The Librarian collects these trajectories and constructs multi-dimensional surfaces from them — geometric representations of how the monitored system’s distributional dynamics evolve across different conversations, tasks, and operating conditions.

With sufficient volume, the surfaces begin to cluster. The Librarian applies unsupervised classification to identify recurring geometric families: this surface shape characterizes healthy factual Q&A sessions; that shape characterizes gradual constraint erosion; this other shape preceded a coherence failure in previous sessions. The taxonomy of surface classes is not predefined. It emerges from the data. The Librarian discovers what “normal” and “abnormal” look like for each specific deployment, rather than imposing generic categories from outside.

The Librarian then tracks a higher-order signal: how the distribution of surface classes itself changes over time. If a deployment that previously produced 80% healthy-pattern surfaces begins producing 60%, that shift is a system-level signal invisible to any individual conversation’s entropy trace. The distribution of surface classes is itself a surface — and its dynamics can be monitored using the same concentration-versus-dispersion logic that the Fast Layer applies to token distributions. This is where the architecture’s fractal self-similarity becomes operationally concrete.
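The 80% to 60% example can be worked through directly. The sketch below assumes each session has already been assigned a surface-class label. The label names and the two sample periods are made up for illustration.

```python
import math
from collections import Counter


def class_shares(labels):
    """Fraction of sessions falling into each surface class."""
    counts = Counter(labels)
    n = len(labels)
    return {label: count / n for label, count in counts.items()}


def share_entropy(shares):
    """Entropy in bits of the distribution over surface classes."""
    return -sum(p * math.log2(p) for p in shares.values() if p > 0)


def share_shift(before, after, label):
    """Change in one class's share between two periods."""
    return class_shares(after).get(label, 0.0) - class_shares(before).get(label, 0.0)


earlier = ["healthy"] * 80 + ["erosion"] * 20   # 80% healthy-pattern surfaces
later = ["healthy"] * 60 + ["erosion"] * 40     # 60% healthy-pattern surfaces

healthy_shift = share_shift(earlier, later, "healthy")
entropy_before = share_entropy(class_shares(earlier))
entropy_after = share_entropy(class_shares(later))
```

The healthy share falls by 20 points while the entropy of the class distribution rises, so the same concentration-versus-dispersion measure registers the shift one level up.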

The building blocks for the Librarian are largely off-the-shelf. Time-series storage uses established infrastructure — InfluxDB or TimescaleDB for EeFrame ingestion and retrieval at volume. Dimensionality reduction for trajectory visualization and clustering uses UMAP or t-SNE implementations from standard scientific Python libraries. Surface classification uses HDBSCAN or scikit-learn clustering algorithms for unsupervised pattern discovery, with nearest-neighbor classifiers for matching new trajectories against established surface classes. Surface construction and geometric computation use scipy’s spatial modules and numpy for the underlying linear algebra.

The custom component is the taxonomy evolution tracker: the subsystem that monitors the distribution of surface classes over time, detects shifts in that distribution, and flags when the taxonomy itself may need revision — new surface families emerging, existing families splitting or merging, previously rare patterns becoming frequent. This is the component that prevents the Librarian’s pattern library from going stale, and it is the component that feeds the Diagnostician’s meta-level monitoring when that module is deployed.

The Librarian’s deployment model requires no pre-training and no labeled data. It begins learning from the first EeFrame it receives. Classification accuracy improves with volume. The taxonomy stabilizes as the library matures. Early deployment produces coarse pattern groupings; extended operation produces increasingly refined classification. The Librarian does not need to be told what to look for. It needs to be given data and time.

The Spokesperson

The Spokesperson is the Entropy Engine’s outward-facing module — the only component designed to communicate with humans rather than with other parts of the system. Its existence in the base configuration is not a convenience. It is an architectural requirement derived from the same necessity chain that produced every other component: a monitoring system that cannot be understood, questioned, or overridden by the humans accountable for the systems it monitors is not a safety tool. It is an unaccountable automated agent.

The Spokesperson serves three functions.

First, it translates. The Engine’s internal state — the Fast Layer’s entropy derivatives, the Librarian’s surface classifications, the stability assessments, the constraint status flags — is expressed in machine-readable formats optimized for computational processing. None of it is legible to a compliance officer, an engineering manager, or an executive reviewing an incident report. The Spokesperson maps these internal representations into domain-specific natural language that operators can evaluate and act on. “The entropy surface for this session matches the geometric profile the Librarian has classified as early-stage constraint erosion, with H″ positive and increasing over the last forty evaluation cycles” becomes, in the Spokesperson’s translation, a clear alert at the appropriate severity level for the appropriate audience.

Second, it provides controls. Every operator action that affects the Engine’s behavior routes through the Spokesperson: adjusting alert thresholds, suspending or resuming individual modules, modifying the Librarian’s classification sensitivity, changing the Decision Gate’s routing rules, and shutting down any component up to and including the entire Engine. The off switches live here. They are always accessible. Role-based access control governs who can execute which actions, ensuring that operational changes require appropriate authorization.
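A minimal sketch of role-gated controls with an audit trail follows. The role names, action names, and `ControlPlane` class are hypothetical. A real deployment would use the RBAC facilities of its own environment.

```python
ROLE_PERMISSIONS = {
    "viewer": frozenset(),
    "operator": frozenset({"adjust_threshold", "suspend_module", "resume_module"}),
    "admin": frozenset({
        "adjust_threshold", "suspend_module", "resume_module",
        "change_routing_rules", "shutdown_engine",
    }),
}


class ControlPlane:
    """Routes operator actions through a permission check and records every attempt."""

    def __init__(self):
        self.audit_log = []

    def execute(self, user, role, action, target):
        allowed = action in ROLE_PERMISSIONS.get(role, frozenset())
        self.audit_log.append({
            "user": user, "role": role, "action": action,
            "target": target, "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"role {role!r} may not perform {action!r}")
        return f"{action} applied to {target}"
```

Denied attempts are logged before the error is raised, so the audit trail records who tried to do what as well as what succeeded.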

Third, it handles the VOTE path. When the Decision Gate routes a conflict to human resolution — two constraints in tension that the system cannot resolve autonomously — the Spokesperson presents the conflict in terms the human decision-maker can understand, collects the resolution, and propagates it back through the constraint system.
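The control function described above can be sketched in a few lines. This is a minimal illustration, assuming a static role table: the role names, action names, and the `ControlPlane` class are hypothetical and not part of the Engine's specification. The point it shows is that every attempt is logged before the permission check decides whether it proceeds.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-action table; real roles would be client-specific.
ROLE_PERMISSIONS = {
    "viewer": frozenset(),
    "operator": frozenset({"adjust_threshold", "suspend_module", "resume_module"}),
    "admin": frozenset({"adjust_threshold", "suspend_module", "resume_module",
                        "shutdown_engine"}),
}


@dataclass
class ControlPlane:
    """Single entry point for operator actions, with an append-only audit log."""
    audit_log: list = field(default_factory=list)

    def execute(self, user: str, role: str, action: str, target: str) -> str:
        allowed = action in ROLE_PERMISSIONS.get(role, frozenset())
        # Record every attempt, including denied ones, before acting on it.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user, "role": role, "action": action,
            "target": target, "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"role '{role}' may not perform '{action}'")
        return f"{action} accepted for {target}"
```

A production deployment would presumably delegate the permission check to a standard RBAC library and write the log to durable storage; the sketch only shows the shape of the single governed path.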

The building blocks are largely established. Operator dashboards use frameworks such as Grafana, Streamlit, or Retool depending on the client’s existing infrastructure. Alerting pipelines integrate with PagerDuty or Opsgenie for incident routing. Logging and audit trails use the ELK stack or equivalent structured logging infrastructure, providing the complete record needed for post-incident review and regulatory compliance. Access control uses standard RBAC libraries appropriate to the deployment environment.

The custom component is the translation layer — the subsystem that maps entropy surface classifications and constraint states into domain-specific language calibrated to each client’s operational vocabulary. A healthcare deployment describes constraint erosion differently than a financial services deployment. This translation may be LLM-assisted, using a small dedicated model to generate natural-language summaries from structured Engine state, though rule-based templates may suffice for deployments with well-defined operational vocabularies.
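For the rule-based option, a sketch might look like the following. It assumes a per-domain template table keyed by the Librarian's surface class; the `EngineState` fields, the class label `constraint_erosion`, and the wording of both vocabularies are invented for illustration and do not come from any real deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EngineState:
    surface_class: str  # label assigned by the Librarian (hypothetical value)
    h_accel: float      # H'' over the recent evaluation window
    cycles: int         # evaluation cycles over which the pattern has persisted


# Hypothetical client vocabularies: same Engine state, different wording.
TEMPLATES = {
    "healthcare": {
        "constraint_erosion":
            "Session is drifting from documented care instructions "
            "({trend} for {cycles} cycles).",
    },
    "finance": {
        "constraint_erosion":
            "Session is drifting from stated compliance limits "
            "({trend} for {cycles} cycles).",
    },
}


def translate(state: EngineState, domain: str) -> str:
    """Map structured Engine state to a domain-specific sentence."""
    if state.h_accel > 0:
        trend = "instability rising"
    elif state.h_accel < 0:
        trend = "instability easing"
    else:
        trend = "instability steady"
    template = TEMPLATES[domain].get(state.surface_class)
    if template is None:
        # Fall back to a generic message for classes with no template yet.
        return f"Unclassified pattern '{state.surface_class}' ({trend} for {state.cycles} cycles)."
    return template.format(trend=trend, cycles=state.cycles)
```

Templates of this kind are easy to audit, which is one reason they may suffice where the operational vocabulary is already well defined.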

The Spokesperson’s configuration is client-specific. Reporting frequency, alert severity mappings, dashboard layout, access roles, escalation paths, and translation vocabulary all adapt to the client’s operational culture and regulatory requirements. The Spokesperson adapts to the organization. The organization does not need to adapt to the Spokesperson.

V.C. Extended Modules

The base configuration — Librarian and Spokesperson — provides pattern recognition and human interface. The extended modules add semantic depth, adaptive tuning, and fleet-level visibility. Each addresses a specific insufficiency that the base configuration cannot resolve.

The Constraint Auditor

The Fast Layer detects distributional change. The Librarian classifies the geometric pattern of that change. Neither can tell you which specific semantic commitment is at risk when drift occurs. The Constraint Auditor fills this gap.

It runs a dedicated small language model — Llama-8B class or a fine-tuned extraction model — in parallel with primary generation, operating at 100 to 300 milliseconds average latency. Its job is to read the conversation history, extract semantic constraints from natural language utterances, classify them according to a hierarchical ontology, maintain them in a structured network, and validate proposed outputs against accumulated commitments.

The constraint ontology defines 26 types organized into seven categories: FACTUAL (verifiable claims about the world), PREFERENCE (stated user preferences and priorities), BOUNDARY (explicit limits and restrictions), TEMPORAL (time-dependent conditions and deadlines), SPATIAL (location and proximity requirements), LOGICAL (inferential dependencies between statements), and COHERENCE (consistency requirements across the conversation). Each constraint carries metadata: strength (HARD, FIRM, SOFT), scope (GLOBAL, SESSION, DRAFT), mode (ASSERTIVE or DIRECTIVE), source provenance, and confidence score.
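The metadata listed above maps naturally onto a typed record. The sketch below uses the category, strength, scope, and mode names from the text; the field names, the free-string `type_name` (the 26 individual types are not enumerated here), and the assumption that confidence lies in [0, 1] are illustrative choices, not the Auditor's actual schema.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    FACTUAL = "FACTUAL"
    PREFERENCE = "PREFERENCE"
    BOUNDARY = "BOUNDARY"
    TEMPORAL = "TEMPORAL"
    SPATIAL = "SPATIAL"
    LOGICAL = "LOGICAL"
    COHERENCE = "COHERENCE"


class Strength(Enum):
    HARD = "HARD"
    FIRM = "FIRM"
    SOFT = "SOFT"


class Scope(Enum):
    GLOBAL = "GLOBAL"
    SESSION = "SESSION"
    DRAFT = "DRAFT"


class Mode(Enum):
    ASSERTIVE = "ASSERTIVE"
    DIRECTIVE = "DIRECTIVE"


@dataclass(frozen=True)
class Constraint:
    text: str            # the utterance fragment the constraint was extracted from
    category: Category
    type_name: str       # one of the 26 types; kept as a string in this sketch
    strength: Strength
    scope: Scope
    mode: Mode
    source: str          # provenance, e.g. a turn identifier (hypothetical format)
    confidence: float    # ASSUMPTION: a score in [0, 1]

    def __post_init__(self):
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be in [0, 1]")
```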

Constraints move through a defined lifecycle — EXTRACTED, ACTIVE, CHECKED, DEFERRED, VIOLATED, CONFLICT, RESOLVED, RETRACTED, ARCHIVED — managed by a state machine that ensures every constraint’s status is tracked and auditable. Conflict detection identifies six conflict types: direct contradiction, soft tension, boundary violation, resource conflict, temporal conflict, and priority conflict.
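A lifecycle like this is typically enforced with an explicit transition table. The nine state names below come from the text, but the allowed transitions are an assumption made purely for illustration; the text does not specify which moves are legal. The `ConstraintLifecycle` class is likewise hypothetical.

```python
from enum import Enum, auto


class State(Enum):
    EXTRACTED = auto()
    ACTIVE = auto()
    CHECKED = auto()
    DEFERRED = auto()
    VIOLATED = auto()
    CONFLICT = auto()
    RESOLVED = auto()
    RETRACTED = auto()
    ARCHIVED = auto()


# ASSUMPTION: illustrative transition table, not the Engine's actual rules.
ALLOWED = {
    State.EXTRACTED: {State.ACTIVE, State.RETRACTED},
    State.ACTIVE: {State.CHECKED, State.DEFERRED, State.VIOLATED,
                   State.CONFLICT, State.RETRACTED, State.ARCHIVED},
    State.CHECKED: {State.ACTIVE, State.VIOLATED, State.CONFLICT, State.ARCHIVED},
    State.DEFERRED: {State.ACTIVE, State.RETRACTED, State.ARCHIVED},
    State.VIOLATED: {State.RESOLVED, State.RETRACTED},
    State.CONFLICT: {State.RESOLVED, State.RETRACTED},
    State.RESOLVED: {State.ACTIVE, State.ARCHIVED},
    State.RETRACTED: {State.ARCHIVED},
    State.ARCHIVED: set(),
}


class ConstraintLifecycle:
    """Tracks one constraint's state and keeps the full path for audit."""

    def __init__(self, constraint_id: str):
        self.constraint_id = constraint_id
        self.state = State.EXTRACTED
        self.history = [State.EXTRACTED]

    def advance(self, new_state: State) -> None:
        # Reject any move not listed in the table; state stays unchanged.
        if new_state not in ALLOWED[self.state]:
            raise ValueError(
                f"{self.constraint_id}: {self.state.name} -> {new_state.name} not allowed")
        self.state = new_state
        self.history.append(new_state)
```

Keeping the history on the object is what makes each constraint's status auditable after the fact: the path taken is recorded, not only the current state.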

Off-the-shelf components: the dedicated LLM for extraction, JSON schema validation for structured output parsing, and a graph database (Neo4j or lightweight in-memory graph) for the constraint network. Custom components: the 26-type ontology itself, the lifecycle state machine, and the conflict detection and resolution logic.

The Calibrator

The Fast Layer’s thresholds — what counts as normal entropy, what velocity band indicates stable operation, what acceleration level triggers an alert — are initially set from bench-scale data. They need to evolve as the deployment matures and as the Librarian accumulates operational history. The Calibrator performs this evolution.

It reviews the Librarian’s historical surface classifications alongside actual outcomes: which classifications correctly predicted failures, which produced false alarms, which missed real problems. From this retrospective analysis, it proposes threshold adjustments — widening or narrowing the normal bands for H, H′, and H″, adjusting the weights in the ESI composite, recalibrating the stability classification boundaries.

Off-the-shelf components: scipy.stats for statistical testing, A/B testing frameworks for controlled threshold comparison, Prophet or statistical process control (SPC) libraries for time-series anomaly detection in the Calibrator’s own performance metrics. Custom component: the mapping from Librarian surface classes to specific Fast Layer threshold adjustments, with a mandatory human-approval gate. No threshold change takes effect in the live system without operator authorization through the Spokesperson. The Calibrator proposes. Humans approve. This boundary is not negotiable.
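The approval gate is simple to express in code. In this sketch, which assumes an in-memory store, a proposal never touches the live thresholds until an operator approves it. The class names and the threshold key `h_accel_alert` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ThresholdProposal:
    name: str
    current: float
    proposed: float
    rationale: str
    approved_by: Optional[str] = None


class ThresholdStore:
    """Holds live thresholds; changes only through approve()."""

    def __init__(self, live: dict):
        self._live = dict(live)
        self.pending = []

    @property
    def live(self) -> dict:
        # Return a copy so callers cannot mutate live values directly.
        return dict(self._live)

    def propose(self, name: str, proposed: float, rationale: str) -> ThresholdProposal:
        proposal = ThresholdProposal(name, self._live[name], proposed, rationale)
        self.pending.append(proposal)  # queued only; live values untouched
        return proposal

    def approve(self, proposal: ThresholdProposal, operator: str) -> None:
        if proposal not in self.pending:
            raise ValueError("unknown or already-processed proposal")
        self.pending.remove(proposal)
        proposal.approved_by = operator
        self._live[proposal.name] = proposal.proposed
```

In the architecture described here, the `approve` call would be reachable only through the Spokesperson's control interface.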

The Comparator

A single monitored system’s entropy dynamics tell you about that system. Multiple monitored systems’ entropy dynamics, viewed together, tell you about the environment. The Comparator operates across systems rather than within one.

It aggregates Librarian taxonomies from multiple deployments and identifies cross-system patterns: correlated surface geometries appearing simultaneously across agents (suggesting a shared environmental cause such as a model update or data distribution shift), divergent geometries in systems that previously tracked together (suggesting an agent-specific failure), or novel surface classes emerging across the fleet (suggesting a new operating regime no individual Librarian has encountered before).
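As a much-simplified stand-in for surface geometry comparison, the sketch below flags pairs of systems whose entropy acceleration series move together, using a plain Pearson correlation. It assumes the series are already time-aligned and equal in length, and it does not address the harder problem of aligning taxonomies between Librarian instances. The function names, agent names, and the 0.8 threshold are hypothetical.

```python
import math
from itertools import combinations


def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0  # a flat series carries no co-movement information
    return cov / math.sqrt(vx * vy)


def correlated_pairs(series_by_system, threshold=0.8):
    """Return (system_a, system_b, r) for every pair with r >= threshold."""
    pairs = []
    for a, b in combinations(sorted(series_by_system), 2):
        r = pearson(series_by_system[a], series_by_system[b])
        if r >= threshold:
            pairs.append((a, b, r))
    return pairs


# Hypothetical H'' series from three monitored agents.
fleet = {
    "agent_a": [0.0, 0.1, 0.3, 0.6],
    "agent_b": [0.0, 0.2, 0.6, 1.2],
    "agent_c": [0.5, 0.1, 0.4, 0.0],
}
shared = correlated_pairs(fleet)
```

Here `agent_a` and `agent_b` rise together and are flagged as a pair, the kind of pattern that would suggest a shared environmental cause, while `agent_c` is not.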

Off-the-shelf components: Kafka or equivalent message queues for distributed telemetry aggregation, cross-correlation libraries for pattern comparison, fleet management dashboards extending the Spokesperson’s framework. Custom component: the cross-system surface geometry comparison engine — aligning taxonomies from different Librarian instances that may have developed different classification vocabularies for similar patterns.

V.D. Meta-Layer: The Diagnostician

The Diagnostician monitors the Entropy Engine the same way the Entropy Engine monitors the client system. It sits completely outside the Engine’s real-time operational path. It never touches a live EeFrame. It never modifies a threshold during operation. It reads the Engine’s historical performance data — classification accuracy over time, false alarm rates, threshold stability, taxonomy drift in the Librarian, intervention success rates from the Decision Gate — and applies the same Shannon entropy formalism to the Engine’s own operational distributions.

The logic is recursive but not circular. The Engine’s operational outputs — surface classifications, stability assessments, intervention recommendations — form probability distributions over defined categories. Those distributions have entropy. That entropy has derivatives. When the Engine’s classification patterns are stable, the entropy of its operational distributions is low and velocity fluctuates near zero. When the Engine’s own performance is degrading — the Librarian’s taxonomy drifting, the Calibrator’s adjustments producing diminishing returns, the Auditor’s extraction accuracy declining — the entropy of its operational distributions increases, and the Diagnostician’s acceleration signal detects the curvature before the degradation becomes visible in downstream outcomes.
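To make the recursion concrete, the sketch below computes Shannon entropy over the Engine's own classification labels in successive windows, then takes first and second differences. The window contents, the label names, the choice of bits as the unit, and the use of simple finite differences are all assumptions made for illustration.

```python
import math
from collections import Counter


def label_entropy(labels):
    """Shannon entropy (bits) of the empirical distribution of labels."""
    total = len(labels)
    if total == 0:
        return 0.0
    counts = Counter(labels)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def entropy_dynamics(windows):
    """Entropy per window plus first and second finite differences."""
    h = [label_entropy(w) for w in windows]
    velocity = [h[i] - h[i - 1] for i in range(1, len(h))]
    accel = [velocity[i] - velocity[i - 1] for i in range(1, len(velocity))]
    return h, velocity, accel


# Hypothetical Librarian outputs over three consecutive review windows.
windows = [
    ["stable", "stable", "stable", "stable"],   # concentrated: 0 bits
    ["stable", "stable", "drift", "drift"],     # two classes: 1 bit
    ["stable", "drift", "novel", "erosion"],    # four classes: 2 bits
]
h, velocity, accel = entropy_dynamics(windows)
```

In this toy series the Engine's output distribution spreads steadily, so velocity is constant at one bit per window and acceleration is zero. A Diagnostician built along these lines would look for windows where acceleration turns positive.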

The Diagnostician uses the same statistical and time-series libraries as the Calibrator, applied to Engine telemetry rather than client telemetry. Its custom component is the formal definition of what distributional drift means for a monitoring system’s own outputs, and the calibration of detection thresholds for these meta-level signals.

The Diagnostician activates once the Librarian has accumulated a mature enough taxonomy to evaluate — there is nothing to diagnose until the system has enough operational history to exhibit drift. It is not needed at initial deployment. It becomes essential as the Engine operates at scale over extended periods, because any monitoring system that runs long enough will eventually need its own monitor.

VI. Interface Boundaries and Information Flow

Every boundary in the architecture exists to prevent a specific category of failure. Removing or weakening any boundary does not simplify the system. It reintroduces the failure mode that boundary was designed to prevent. This section makes each boundary explicit, states the direction and content of information flow across it, and identifies what goes wrong if the boundary is violated.

System 1 → System 2

EeFrames flow one direction: from the Fast Layer into System 2 modules. System 2 reads EeFrames. It never writes to the Fast Layer’s real-time computation path. The Fast Layer cannot be slowed, paused, or blocked by any System 2 process.

This boundary prevents latency contamination. The Fast Layer’s value depends entirely on its speed — sub-10ms evaluation at every generation step. If any System 2 module could introduce processing delays into the Fast Layer’s path, the monitoring system’s most responsive signal would be throttled by its slowest component. The Librarian’s clustering computation, the Auditor’s semantic extraction, the Spokesperson’s dashboard rendering — any of these could introduce hundreds of milliseconds of latency if coupled to the Fast Layer. The one-way boundary ensures the fastest signal remains fast regardless of what System 2 is doing.
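One common way to enforce a one-way, non-blocking boundary is a bounded queue on which the producer never waits. The sketch below assumes that dropping a frame for slow consumers is acceptable when the queue is full; that policy, and the `FramePublisher` name, are illustrative assumptions rather than the Engine's specified behavior.

```python
import queue


class FramePublisher:
    """Hands frames from the Fast Layer to System 2 without ever blocking."""

    def __init__(self, capacity: int):
        self._q = queue.Queue(maxsize=capacity)
        self.dropped = 0

    def publish(self, frame) -> bool:
        """Called on the Fast Layer path; returns immediately in all cases."""
        try:
            self._q.put_nowait(frame)
            return True
        except queue.Full:
            # Slow consumers cost a dropped frame here, never Fast Layer latency.
            self.dropped += 1
            return False

    def poll(self):
        """Called by System 2 consumers; returns None when nothing is waiting."""
        try:
            return self._q.get_nowait()
        except queue.Empty:
            return None
```

A design that also writes every frame to durable storage before publishing could tolerate drops on this in-memory path, since consumers could catch up from the stored record.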

Within System 2

Modules communicate through defined records: EeFrames, constraint records, surface classifications, taxonomy updates, configuration parameters. No module has direct access to another module’s internal state. The Librarian cannot reach into the Auditor’s constraint graph. The Calibrator cannot modify the Librarian’s clustering parameters directly. Each module reads from defined inputs and writes to defined outputs.

This boundary prevents cascading failures. If the Constraint Auditor encounters a malformed input and enters an error state, the failure is contained to the Auditor. The Librarian continues classifying surfaces. The Spokesperson continues reporting. The Fast Layer continues monitoring. A bug, crash, or degradation in any single module does not propagate to other modules because no module depends on another module’s internal consistency — only on the records published through the defined interface. Modules can fail independently, be restarted independently, and be upgraded independently.

System 2 → Monitored System

The Decision Gate emits non-prescriptive nudges. The nudge identifies the nature and severity of detected drift and provides a recommendation. It does not override the monitored system’s output, substitute a predetermined safe response, or filter tokens from the generation stream.

This boundary prevents capability degradation. Prescriptive intervention — replacing or filtering the model’s output — reduces the monitored system to the safety layer’s understanding of what the output should be. The safety layer’s understanding is necessarily narrower than the model’s full capability. Non-prescriptive nudging preserves the model’s generative capacity while changing the informational context in which generation occurs. The corrective intelligence stays in the model, not in the monitor. The monitor detects. The model corrects. This boundary is what distinguishes the Entropy Engine from guardrail systems that operate through output filtering.

Spokesperson → Humans

All operator interactions with the Engine route through the Spokesperson. There is no backdoor administrative access to individual modules. An operator cannot modify the Calibrator’s thresholds, suspend the Librarian, or adjust the Decision Gate’s routing rules except through the Spokesperson’s control interfaces, governed by role-based access control and logged for audit.

This boundary prevents ungoverned modification. A monitoring system whose internal parameters can be changed through multiple access paths — direct database edits, API backdoors, configuration file changes outside the control plane — is a system whose operational state cannot be reliably determined at any given moment. Channeling all operator actions through a single governed interface ensures that every change is authorized, logged, and reversible. It also ensures that the Spokesperson’s reporting of the Engine’s current state is always accurate, because no state change can occur without the Spokesperson’s knowledge.

Diagnostician → Engine

The Diagnostician reads the Engine’s operational logs. It never writes to the Engine’s live data path. Its recommendations — threshold adjustments, taxonomy revisions, module recalibrations — are proposals that require human approval through the Spokesperson before taking effect.

This boundary prevents self-modification loops. A monitoring system that can modify its own parameters in real time based on its own assessment of its own performance is a system capable of auto-destabilization. The Diagnostician may correctly identify that a threshold should be adjusted — but the adjustment’s effect on overall system behavior must be evaluated by a human who understands the operational context before it takes effect. The Diagnostician proposes. The Spokesperson presents. The human approves. The boundary ensures that the recursive self-monitoring capability cannot become recursive self-modification without human authorization.

VII. Deployment Model: From Bench to Production

The architecture is designed for incremental deployment. Each phase delivers independent operational value. No phase requires commitment to subsequent phases. And critically, each phase produces the operational data needed to validate — or invalidate — the assumptions of the phase that follows. An organization that deploys Phase 1 and discovers the signal is insufficient for their use case has learned something valuable without having invested in infrastructure they cannot use. An organization that deploys through Phase 3 and finds no need for fleet-level monitoring never builds Phase 4. The architecture accommodates both outcomes.

Phase 1: System 1 Standalone

Deploy the Fast Layer with direct logit access through platform-level integration with the inference engine (vLLM, TensorRT-LLM, or equivalent serving infrastructure). The Fast Layer computes exact H, H′, and H″ from the model’s actual probability distributions at each generation step. EeFrames are written to structured storage. Basic threshold alerting surfaces instability events through a minimal reporting interface.
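Given direct logit access, the per-step computation is small. The sketch below takes a raw logit vector, applies a numerically stable softmax, computes Shannon entropy in bits, and estimates H′ and H″ with backward finite differences over the last three steps. The difference scheme, the unit, and the returned field names are assumptions for illustration; they are not the EeFrame schema.

```python
import math


def shannon_entropy_bits(logits):
    """Entropy (bits) of softmax(logits), using the max-shift for stability."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    probs = [e / z for e in exps]
    return -sum(p * math.log2(p) for p in probs if p > 0.0)


class EntropyTracker:
    """Keeps the last three entropy values and derives H' and H''."""

    def __init__(self):
        self._h = []

    def update(self, logits):
        h = shannon_entropy_bits(logits)
        self._h = (self._h + [h])[-3:]
        # Backward differences: undefined until enough history exists.
        velocity = self._h[-1] - self._h[-2] if len(self._h) >= 2 else None
        accel = (self._h[-1] - 2 * self._h[-2] + self._h[-3]
                 if len(self._h) == 3 else None)
        return {"H": h, "H_prime": velocity, "H_double_prime": accel}
```

A production Fast Layer would presumably run this on the accelerator over the full vocabulary rather than in pure Python; the arithmetic is the same.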

Phase 1 validates the monitoring signal at production scale: Does entropy acceleration predict coherence failures in this deployment’s specific task mix, user population, and model configuration? The bench-scale results demonstrated the signal’s existence. Phase 1 tests its reliability under production conditions. If the signal does not hold up there, subsequent phases are not warranted.

Phase 2: Base System 2

Deploy the Librarian and Spokesperson alongside the operational Fast Layer. The Librarian begins building its surface geometry taxonomy from the EeFrames Phase 1 has been accumulating. The Spokesperson provides the operator dashboard, alerting pipeline, control interfaces, and audit logging.

Phase 2 validates pattern recognition: Does the Librarian’s unsupervised classification produce operationally meaningful surface categories? Do operators find the Spokesperson’s translations actionable? Can the Librarian distinguish healthy trajectories from drift trajectories reliably enough to reduce false alarm rates compared to Phase 1’s threshold-only alerting? The data to answer these questions comes from Phase 1’s accumulated EeFrame corpus. Phase 2 could not exist without Phase 1’s output.

Phase 3: Extended System 2

Deploy the Constraint Auditor for semantic contradiction detection, the Calibrator for threshold optimization from historical performance, and the full five-path Decision Gate routing. The system now combines statistical monitoring (Fast Layer), geometric pattern recognition (Librarian), semantic analysis (Auditor), adaptive tuning (Calibrator), and human governance (Spokesperson) into an integrated monitoring architecture.

Phase 3 validates semantic depth: Does the Constraint Auditor catch failures that the Librarian’s geometric classification alone would miss? Does the Calibrator’s threshold tuning measurably improve detection precision? Does the full Decision Gate routing — PROCEED, PAUSE, REVISE, VOTE, ABORT — produce better outcomes than Phase 2’s simpler alert-based responses? The data to answer these questions comes from Phase 2’s classification history and operator feedback.

Phase 4: Fleet and Meta

Deploy the Comparator for cross-system pattern detection and the Diagnostician for recursive self-monitoring. The system now operates at fleet scale with self-diagnostic capability.

Phase 4 validates scale and longevity: Does the Comparator detect cross-system patterns invisible to individual Librarians? Does the Diagnostician catch Engine degradation before it affects monitoring quality? These questions only become answerable once multiple systems are running (Comparator) and once the Engine has operated long enough for its own performance to potentially drift (Diagnostician). Phase 4 cannot be evaluated until Phases 1 through 3 have run at sufficient scale and duration.

Each phase stands on its own. Each phase informs the next. The architecture does not ask for trust. It asks for evidence, one phase at a time.

VIII. What Remains To Be Built vs. What Is Proven

Honest accounting requires distinguishing between what has been demonstrated, what has been corroborated by others, and what has been designed but not yet tested.

Proven at bench scale. The Fast Layer’s monitoring signal — Shannon entropy and its derivatives over token probability distributions — detects distributional drift in real time. The EeFrame structure produces a complete entropy position statement at each evaluation cycle. The Decision Gate routes outputs through graduated response paths based on stability classification. Bench-scale results across 56 responses in a single session showed 31% token efficiency improvement, 95% constraint adherence versus 60% baseline, 10-to-20-step early warning from the acceleration signal, and a measurable increase in strategy switching. These results demonstrate that the signal exists and that the architecture produces the expected directional effects.

Independently corroborated. Shannon entropy over next-token distributions as a behavioral drift signal has been validated by peer-reviewed work from Oxford, Carnegie Mellon, ASU, Algoverse, and Glasgow, published in Nature, PNAS, and ACL proceedings. The non-prescriptive intervention philosophy — restructuring informational context rather than overriding output — has been independently implemented and tested by ERGO with substantial performance gains across five models and five task categories.

Designed but not production-tested. Every System 2 module described in this document — the Librarian, the Spokesperson, the Constraint Auditor, the Calibrator, the Comparator, and the Diagnostician — is at architectural design stage. The module specifications, interface definitions, off-the-shelf component selections, and custom component requirements are defined. None have been implemented at production scale.

Known limitations. The bench-scale implementation uses simulated entropy estimation from proxy signals rather than direct computation from logit vectors, introducing accumulating estimation drift over session length. Testing was conducted in a single extended session, not across multiple sessions, tasks, users, or models. No independent team has replicated the full Entropy Engine architecture. Direct logit access through platform-level integration with inference engines is the single highest-value requirement for production validation: it removes the proxy-estimation error and is intended to allow exact entropy computation within the Fast Layer’s sub-10ms latency budget.

What this document claims: the architecture is mathematically grounded in a uniqueness proof, operationally sound in its separation of concerns and interface boundaries, buildable with off-the-shelf components supplemented by defined custom elements, and incrementally deployable through phases that each deliver independent value and produce the data needed to evaluate the next.

What this document does not claim: production readiness. That claim requires building what is designed, testing what is built, and reporting what is found — with the same honesty applied here.
